This is the full developer documentation for Algorand Developer Portal

# Algorand Developer Portal

> Everything you need to build solutions powered by the Algorand blockchain network.

Start your journey today

## Become an Algorand Developer

Follow our quick start guide to install Algorand’s developer toolkit and go from zero to deploying your "Hello, world" smart contract in mere minutes using TypeScript or Python pathways.

[Install AlgoKit](getting-started/algokit-quick-start)

### [AlgoKit code tutorials](https://tutorials.dev.algorand.co)

[Step-by-step introduction to Algorand through AlgoKit Utils TypeScript.](https://tutorials.dev.algorand.co)

### [Example gallery](https://examples.dev.algorand.co)

[Explore and launch batteries-included example apps.](https://examples.dev.algorand.co)

### [Connect in Discord](https://discord.gg/algorand)

[Meet other devs and get code support from the community.](https://discord.gg/algorand)

### [Contact the Foundation](https://algorand.co/algorand-foundation/contact)

[Reach out to the team directly with technical inquiries.](https://algorand.co/algorand-foundation/contact)

Join the network

## Run an Algorand node

[Install your node](/nodes/overview/)

Join the Algorand network with a validator node using accessible commodity hardware in a matter of minutes. Experience how easy it is to become a node-runner so you can participate in staking rewards, validate blocks, submit transactions, and read chain data.

# Intro to AlgoKit

AlgoKit is a comprehensive software development kit designed to streamline and accelerate the process of building decentralized applications on the Algorand blockchain. At its core, AlgoKit features a powerful command-line interface (CLI) tool that provides developers with an array of functionalities to simplify blockchain development. Along with the CLI, AlgoKit offers a suite of libraries, templates, and tools that facilitate rapid prototyping and deployment of secure, scalable, and efficient applications. Whether you’re a seasoned blockchain developer or new to the ecosystem, AlgoKit offers everything you need to harness the full potential of Algorand’s impressive tech and innovative consensus algorithm.

[Introduction to AlgoKit](https://www.youtube.com/embed/pojEI-8h0lg?rel=0)

## AlgoKit CLI


AlgoKit CLI is a powerful set of command line tools for Algorand developers. Its goal is to help developers build and launch secure, automated, production-ready applications rapidly.

### AlgoKit CLI commands


Here is the list of commands that you can use with AlgoKit CLI.

* [Bootstrap](/docs/algokit-cli/python/latest/features/project/bootstrap) - Bootstrap AlgoKit project dependencies

* [Compile](/docs/algokit-cli/python/latest/features/compile) - Compile Algorand Python code

* [Completions](/docs/algokit-cli/python/latest/features/completions) - Install shell completions for AlgoKit

* [Deploy](/docs/algokit-cli/python/latest/features/project/deploy) - Deploy your smart contracts effortlessly to various networks

* [Dispenser](/docs/algokit-cli/python/latest/features/dispenser) - Fund your TestNet account with Algo from the AlgoKit TestNet Dispenser

* [Doctor](/docs/algokit-cli/python/latest/features/doctor) - Check AlgoKit installation and dependencies

* [Explore](/docs/algokit-cli/python/latest/features/explore) - Explore Algorand blockchains using Lora

* [Generate](/docs/algokit-cli/python/latest/features/generate) - Generate code for an Algorand project

* [Goal](/docs/algokit-cli/python/latest/features/goal) - Run the Algorand goal CLI against AlgoKit LocalNet

* [Init](/docs/algokit-cli/python/latest/features/init) - Quickly initialize new projects using official Algorand Templates or community provided templates

* [LocalNet](/docs/algokit-cli/python/latest/features/localnet) - Manage a locally sandboxed private Algorand network

* [Project](/docs/algokit-cli/python/latest/features/project) - Perform a variety of AlgoKit project workspace related operations like bootstrapping development environment, deploying smart contracts, running custom commands, and more

* [Task](/docs/algokit-cli/python/latest/features/tasks) - Perform a variety of useful operations like signing & sending transactions, minting ASAs, creating vanity address, and more, on the Algorand blockchain

To learn more about AlgoKit CLI, refer to the following resources:

* [AlgoKit CLI Documentation](/docs/algokit-cli/python/latest/) - Learn more about using and configuring AlgoKit CLI

* [AlgoKit CLI Repo](https://github.com/algorandfoundation/algokit-cli) - Explore the codebase and contribute to its development

## Algorand Python


If you are a Python developer, you no longer need to learn a complex smart contract language to write smart contracts.

Algorand Python is a semantically and syntactically compatible, typed Python language that works with standard Python tooling and allows you to write Algorand smart contracts (apps) and logic signatures in Python. Since the code runs on the Algorand Virtual Machine (AVM), there are limitations and minor differences in behavior from standard Python, but all code you write with Algorand Python is Python code.

Here is an example of a simple Hello World smart contract written in Algorand Python:

```py
from algopy import ARC4Contract, String, arc4


class HelloWorld(ARC4Contract):
    @arc4.abimethod()
    def hello(self, name: String) -> String:
        return "Hello, " + name + "!"
```

To learn more about Algorand Python, refer to the following resources:

* [Algorand Python Documentation](/concepts/smart-contracts/languages/python/) - Learn more about the design and implementation of Algorand Python

* [Algorand Python Repo](https://github.com/algorandfoundation/puya) - Explore the codebase and contribute to its development

## Algorand TypeScript


If you are a TypeScript developer, you no longer need to learn a complex smart contract language to write smart contracts.

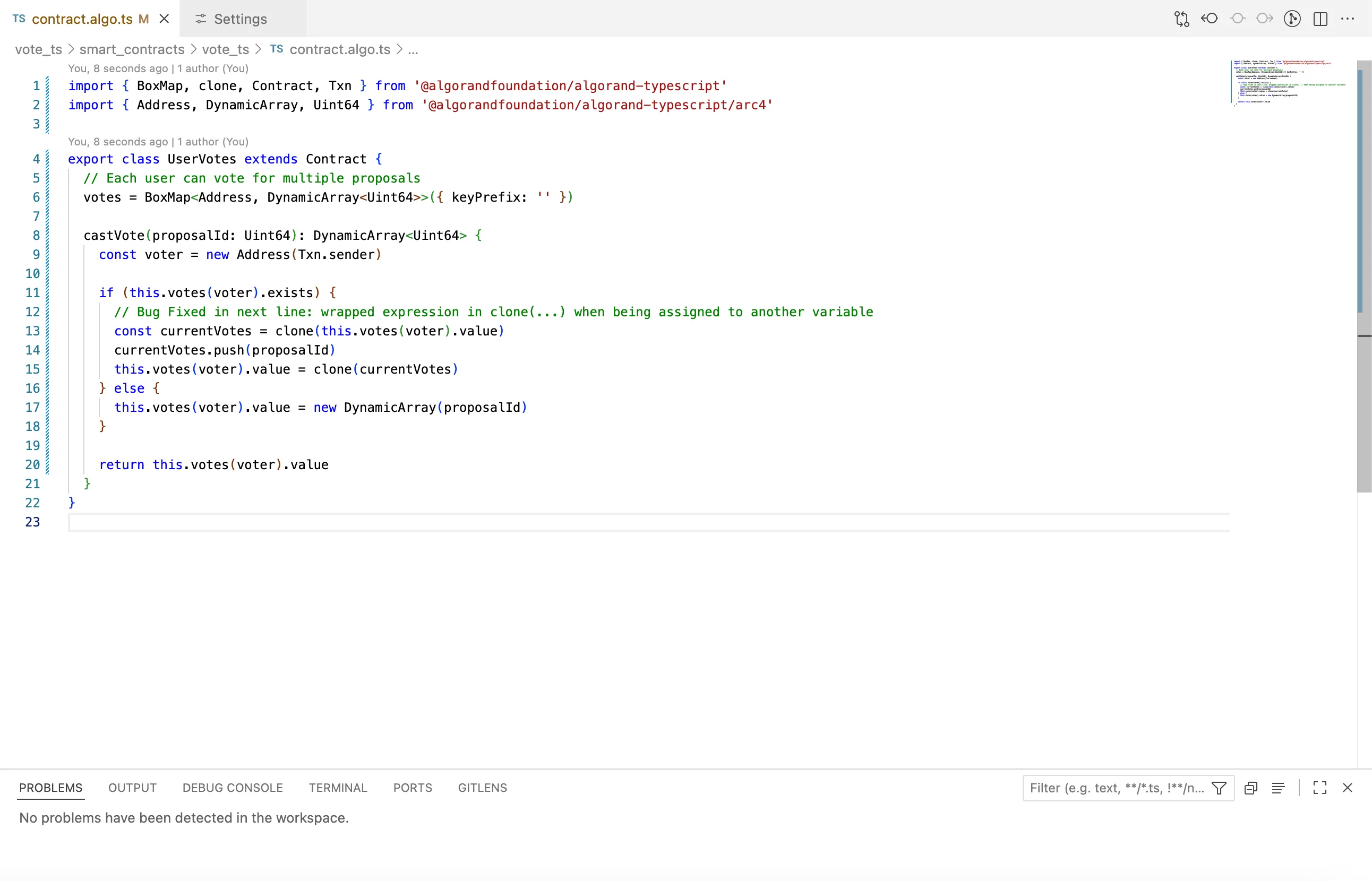

Algorand TypeScript is a semantically and syntactically compatible, typed TypeScript language that works with standard TypeScript tooling and allows you to write Algorand smart contracts (apps) and logic signatures in TypeScript. Since the code runs on the Algorand Virtual Machine (AVM), there are limitations and minor differences in behavior from standard TypeScript, but all code you write with Algorand TypeScript is TypeScript code.

Here is an example of a simple Hello World smart contract written in Algorand TypeScript:

```ts
import { Contract } from '@algorandfoundation/algorand-typescript';

export class HelloWorld extends Contract {
  hello(name: string): string {
    return `Hello, ${name}`;
  }
}
```

To learn more about Algorand TypeScript, refer to the following resources:

* [Algorand TypeScript Documentation](/concepts/smart-contracts/languages/typescript/) - Learn more about the design and implementation of Algorand TypeScript

* [Algorand TypeScript Repo](https://github.com/algorandfoundation/puya-ts) - Explore the codebase and contribute to its development

## AlgoKit Utils


AlgoKit Utils is the recommended utility library for all chain interactions, such as sending transactions, creating tokens (ASAs), calling smart contracts, and reading blockchain records. Its goal is to provide intuitive, productive utility functions that make it easier, quicker, and safer to build applications on Algorand. Largely, these functions wrap the underlying Algorand SDK but provide a higher-level interface with sensible defaults and capabilities for common tasks.

AlgoKit Utils is available in TypeScript and Python.

### Capabilities


The library helps you interact with and develop against the Algorand blockchain with a series of end-to-end capabilities as described below:

* [**AlgorandClient**](https://github.com/algorandfoundation/algokit-utils-ts/blob/main/docs/capabilities/algorand-client.md) - The key entrypoint to the AlgoKit Utils functionality

* Core capabilities

  * [**Client management**](https://github.com/algorandfoundation/algokit-utils-ts/blob/main/docs/capabilities/client.md) - Creation of (auto-retry) algod, indexer and kmd clients against various networks resolved from environment or specified configuration

  * [**Account management**](https://github.com/algorandfoundation/algokit-utils-ts/blob/main/docs/capabilities/account.md) - Creation and use of accounts including mnemonic, rekeyed, multisig, transaction signer ([useWallet](https://github.com/TxnLab/use-wallet) for dApps and Atomic Transaction Composer compatible signers), idempotent KMD accounts and environment variable injected

  * [**Algo amount handling**](https://github.com/algorandfoundation/algokit-utils-ts/blob/main/docs/capabilities/amount.md) - Reliable and terse specification of microAlgo and Algo amounts and conversion between them

  * [**Transaction management**](https://github.com/algorandfoundation/algokit-utils-ts/blob/main/docs/capabilities/transaction.md) - Ability to send single, grouped or Atomic Transaction Composer transactions with consistent and highly configurable semantics, including configurable control of transaction notes (including ARC-0002), logging, fees, multiple sender account types, and sending behavior

* Higher-order use cases

  * [**App management**](https://github.com/algorandfoundation/algokit-utils-ts/blob/main/docs/capabilities/app.md) - Creation, updating, deleting, calling (ABI and otherwise) smart contract apps and the metadata associated with them (including state and boxes)

  * [**App deployment**](https://github.com/algorandfoundation/algokit-utils-ts/blob/main/docs/capabilities/app-deploy.md) - Idempotent (safely retryable) deployment of an app, including deploy-time immutability and permanence control and TEAL template substitution

  * [**ARC-0032 Application Spec client**](https://github.com/algorandfoundation/algokit-utils-ts/blob/main/docs/capabilities/app-client.md) - Builds on top of the App management and App deployment capabilities to provide a high productivity application client that works with ARC-0032 application spec defined smart contracts (e.g. via Beaker)

  * [**Algo transfers**](https://github.com/algorandfoundation/algokit-utils-ts/blob/main/docs/capabilities/transfer.md) - Ability to easily initiate Algo transfers between accounts, including dispenser management and idempotent account funding

  * [**Automated testing**](https://github.com/algorandfoundation/algokit-utils-ts/blob/main/docs/capabilities/testing.md) - Terse, robust automated testing primitives that work across any testing framework (including jest and vitest) to facilitate fixture management, quickly generating isolated and funded test accounts, transaction logging, indexer wait management and log capture

  * [**Indexer lookups / searching**](https://github.com/algorandfoundation/algokit-utils-ts/blob/main/docs/capabilities/indexer.md) - Type-safe indexer API wrappers (no more `Record` pain), including automatic pagination control
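The Algo amount handling capability exists because on-chain amounts are whole numbers of microAlgo (1 Algo = 1,000,000 microAlgo), and floating-point arithmetic can silently round them. As a rough, standard-library-only sketch of the underlying arithmetic (the helper names here are hypothetical and are not the AlgoKit Utils API, which provides its own amount helpers), exact conversion can be done with `Decimal`:

```python
from decimal import Decimal
from typing import Union

# On-chain amounts are whole microAlgo; 1 Algo = 1,000,000 microAlgo.
MICROALGOS_PER_ALGO = 1_000_000


def algo_to_microalgo(algo: Union[Decimal, str, int]) -> int:
    """Convert an Algo amount to whole microAlgo (hypothetical helper).

    Pass strings or Decimals rather than floats so values such as "0.1" stay exact.
    Raises ValueError if the amount is finer than 1 microAlgo.
    """
    micro = Decimal(algo) * MICROALGOS_PER_ALGO
    if micro != micro.to_integral_value():
        raise ValueError(f"{algo} Algo is not a whole number of microAlgo")
    return int(micro)


def microalgo_to_algo(micro_algo: int) -> Decimal:
    """Convert whole microAlgo back to an exact Decimal Algo amount."""
    return Decimal(micro_algo) / MICROALGOS_PER_ALGO


print(algo_to_microalgo("1.5"))      # 1500000
print(microalgo_to_algo(2_500_000))  # 2.5
```

Rejecting sub-microAlgo amounts, rather than rounding them, keeps a typo from turning into a different payment amount.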

To learn more about AlgoKit Utils, refer to the following resources:

* [AlgoKit Utils TypeScript Documentation](/docs/algokit-utils/typescript/latest/) - Learn more about the design and implementation of AlgoKit Utils

* [AlgoKit Utils TypeScript Repo](https://github.com/algorandfoundation/algokit-utils-ts#algokit-typescript-utilities) - Explore the codebase and contribute to its development

* [AlgoKit Utils Python Documentation](/docs/algokit-utils/python/latest/) - Learn more about the design and implementation of AlgoKit Utils

* [AlgoKit Utils Python Repo](https://github.com/algorandfoundation/algokit-utils-py#readme) - Explore the codebase and contribute to its development

[Introduction to AlgoKit Utils](https://www.youtube.com/embed/AkUj1GgcMig?rel=0)

## AlgoKit LocalNet


The AlgoKit LocalNet feature allows you to manage (start, stop, reset, and so on) a locally sandboxed private Algorand network. This lets you interact with and deploy changes against your own Algorand network without needing to fund TestNet accounts, worry about whether the information you submit is publicly visible, or stay connected to the Internet (once the network has been started).

AlgoKit LocalNet uses Docker images optimized for a great developer experience. This means the Docker images are small and start fast. It also means that features suited to developers are enabled, such as KMD (so you can programmatically get faucet private keys).
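Because LocalNet exposes a regular algod REST endpoint on your machine, you can query it without any SDK. The sketch below assumes AlgoKit LocalNet's usual defaults (algod at `http://localhost:4001` with an API token of 64 `a` characters); adjust them if your configuration differs. It uses only the standard library and algod's `/v2/status` endpoint, and the function names are hypothetical:

```python
import json
import urllib.request

# Assumed AlgoKit LocalNet defaults; change these if your LocalNet is configured differently.
LOCALNET_ALGOD_URL = "http://localhost:4001"
LOCALNET_ALGOD_TOKEN = "a" * 64


def build_status_request(
    base_url: str = LOCALNET_ALGOD_URL, token: str = LOCALNET_ALGOD_TOKEN
) -> urllib.request.Request:
    """Build a GET request for algod's /v2/status endpoint with the API token header."""
    return urllib.request.Request(
        f"{base_url}/v2/status",
        headers={"X-Algo-API-Token": token},
        method="GET",
    )


def fetch_last_round(timeout: float = 5.0) -> int:
    """Query a running LocalNet and return the latest round its algod reports."""
    with urllib.request.urlopen(build_status_request(), timeout=timeout) as response:
        status = json.load(response)
    return status["last-round"]
```

After `algokit localnet start`, calling `fetch_last_round()` should return a round number; if LocalNet is not running, `urlopen` raises `urllib.error.URLError`.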

To learn more about AlgoKit LocalNet, refer to the following resources:

* [AlgoKit LocalNet Documentation](/docs/algokit-cli/python/latest/features/localnet) - Learn more about using and configuring AlgoKit LocalNet

* [AlgoKit LocalNet GitHub Repository](https://github.com/algorandfoundation/algokit-cli/blob/main/docs/features/localnet.md) - Explore the source code and technical implementation details

## AVM Debugger


The AlgoKit AVM VS Code debugger extension provides a convenient way to debug Algorand smart contracts written in TEAL or compiled with the Puya compiler, using execution traces and source maps.

To learn more about the AVM debugger, refer to the following resources:

* [AVM Debugger Documentation](/algokit/avm-debugger) - Learn more about using and configuring the AVM Debugger

* [AVM Debugger Extension](https://marketplace.visualstudio.com/items?itemName=AlgorandFoundation.algokit-avm-vscode-debugger) - Install the AVM Debugger extension from the Visual Studio Marketplace

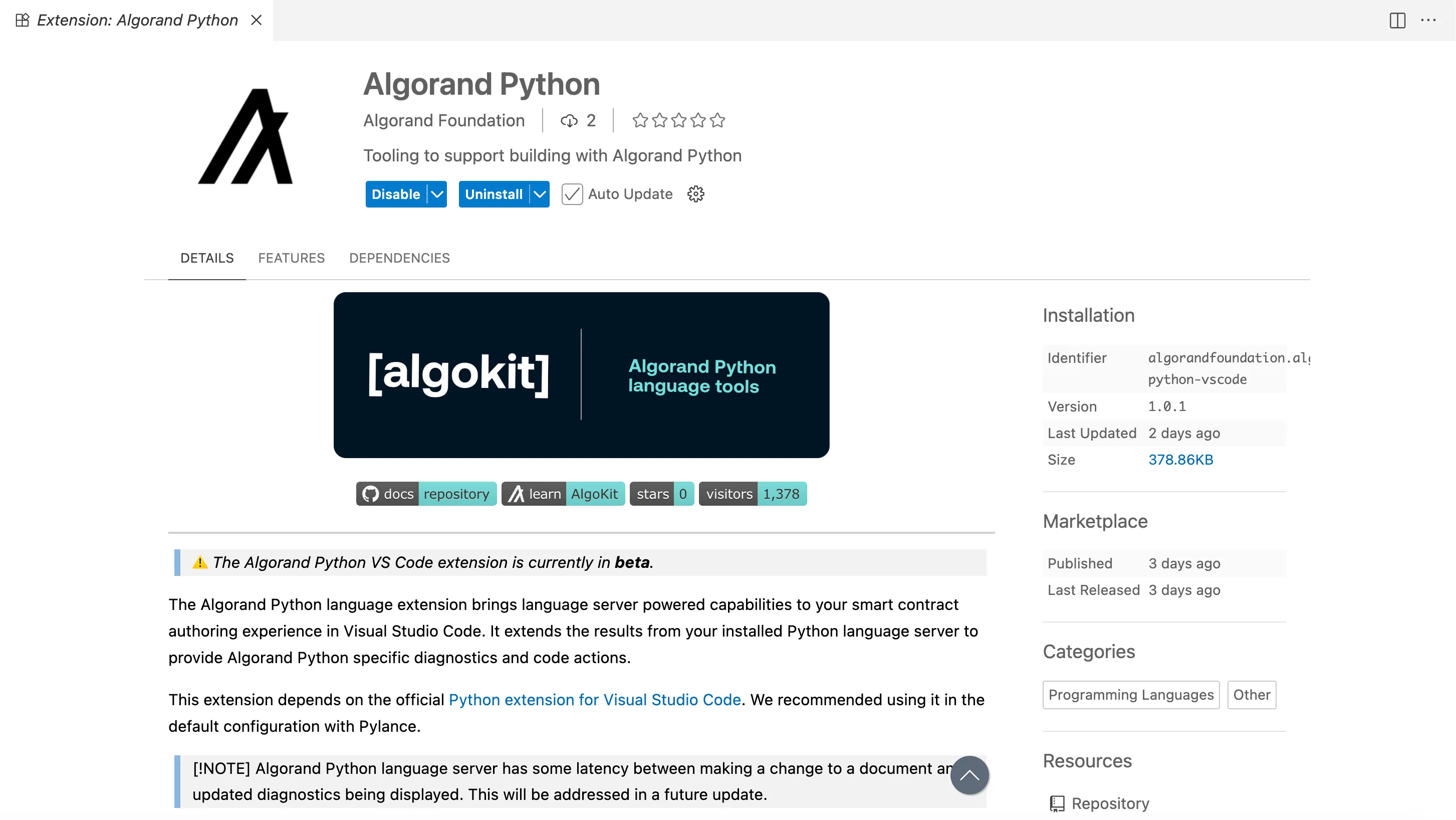

## Language Servers


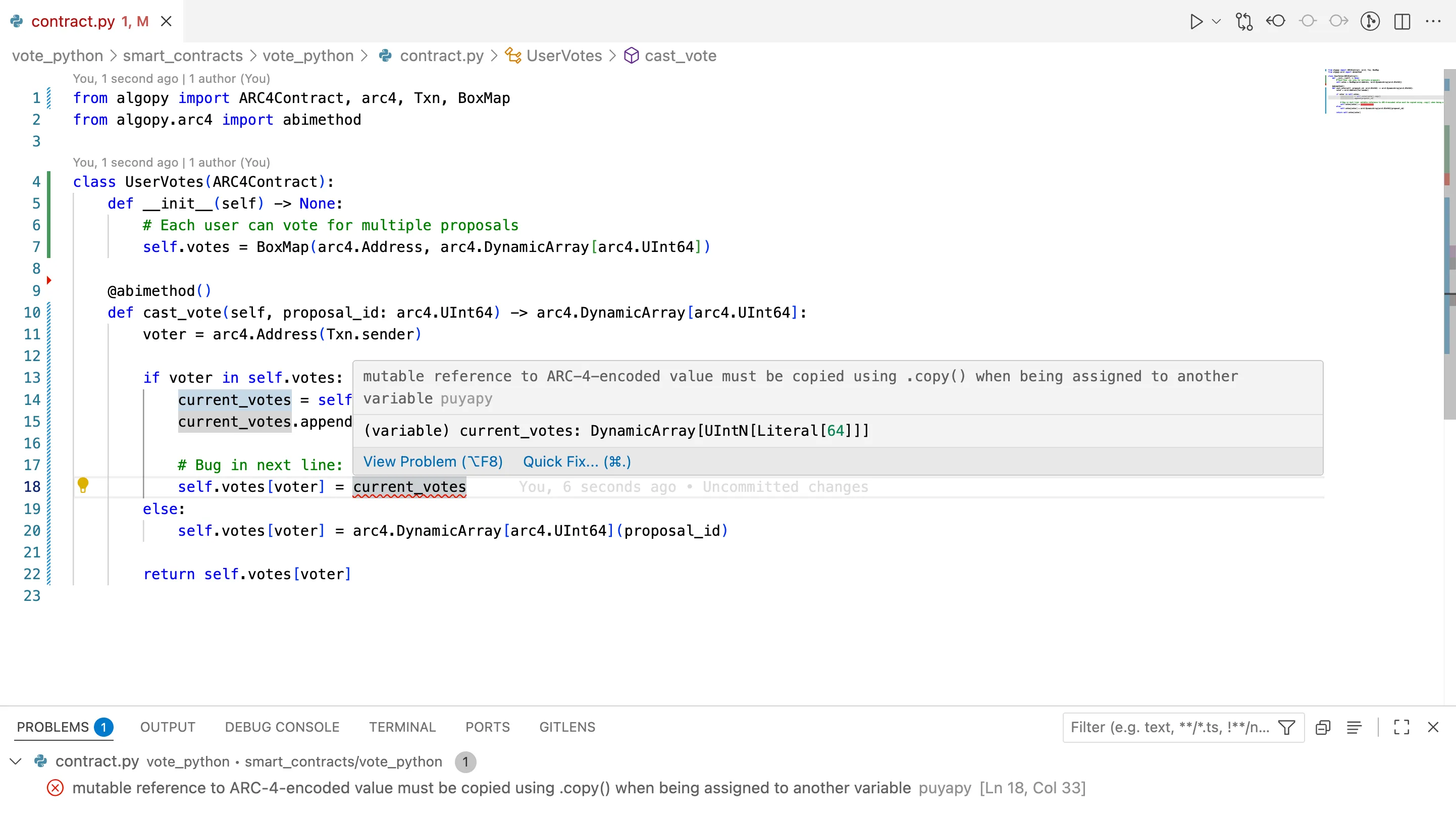

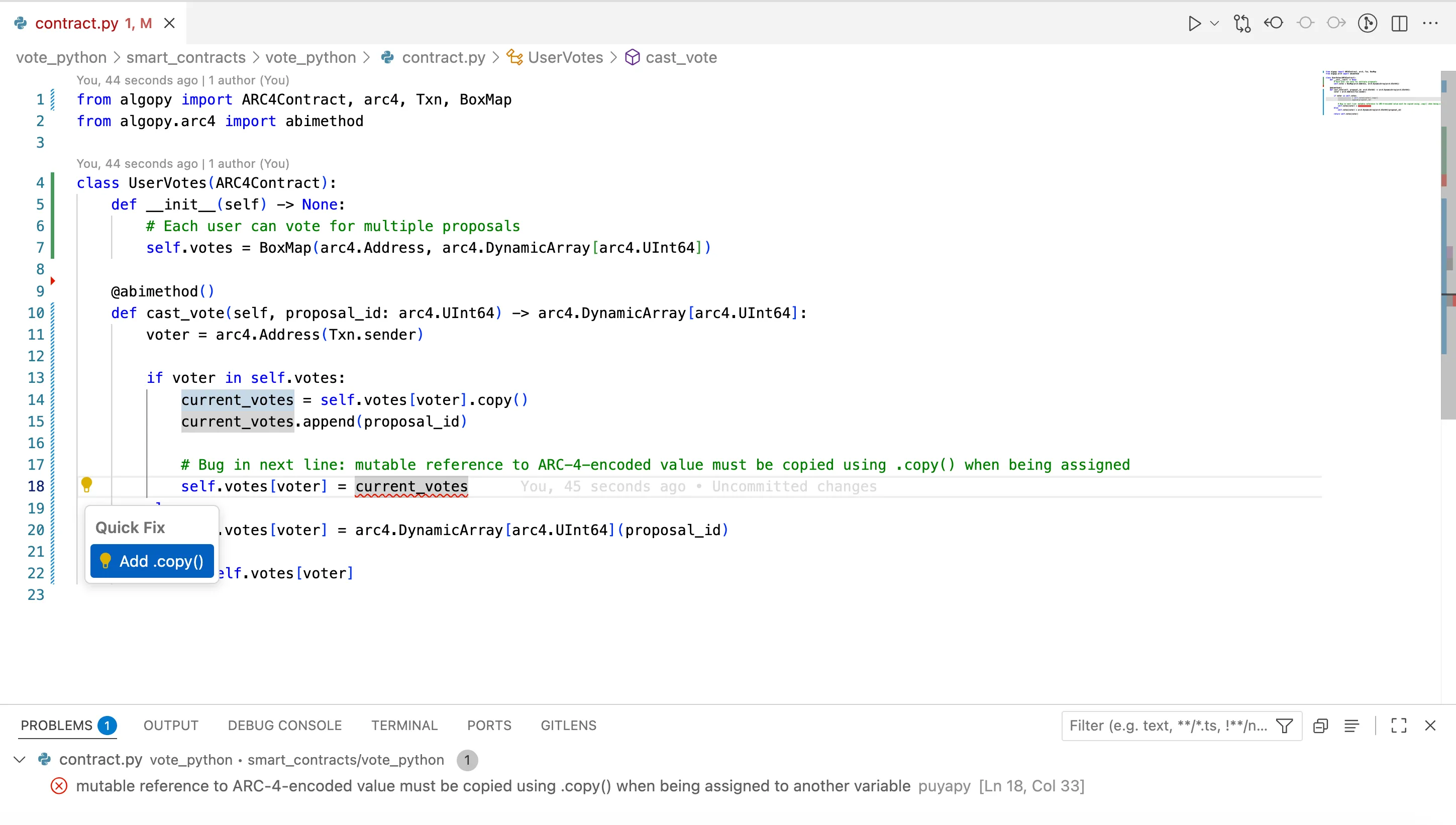

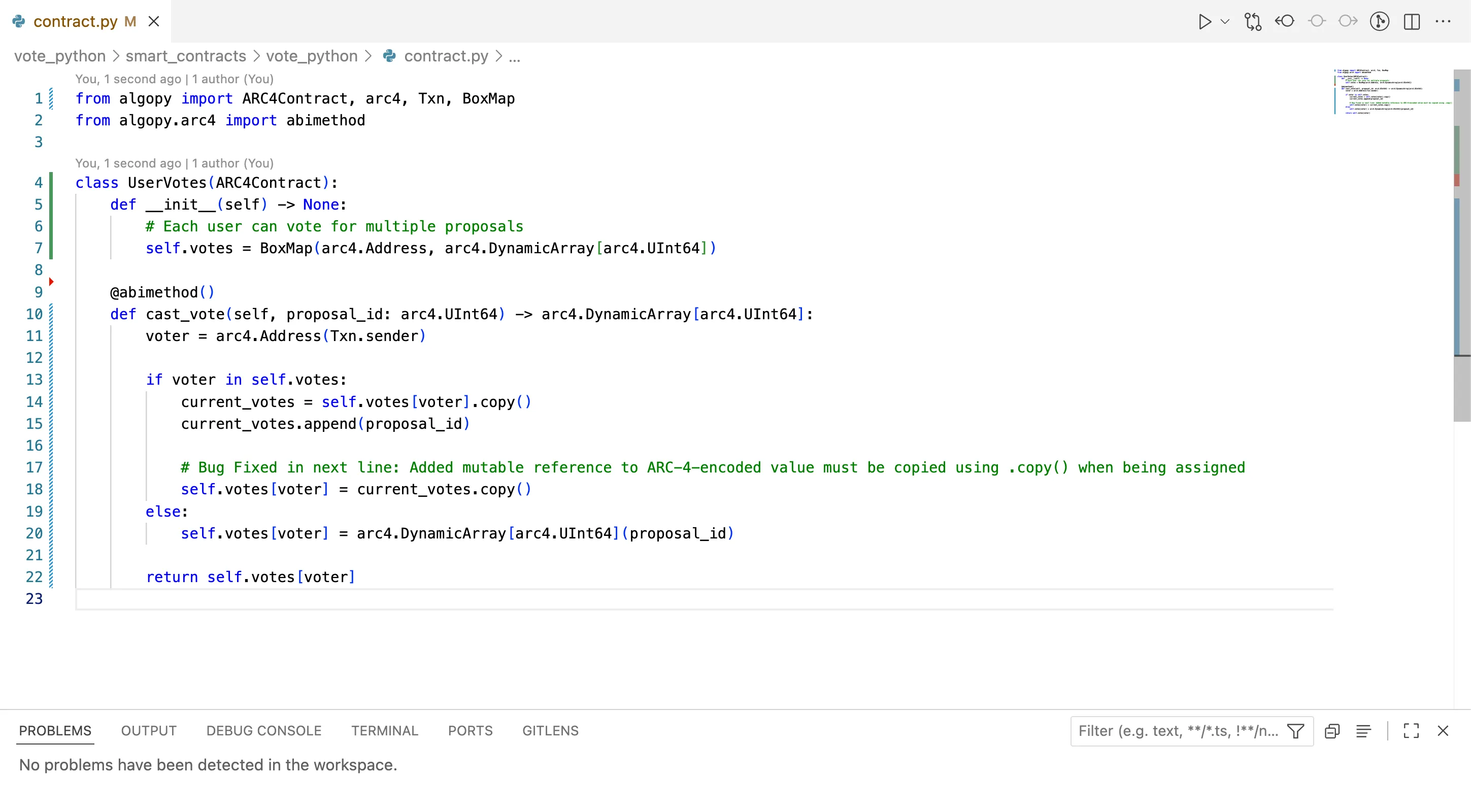

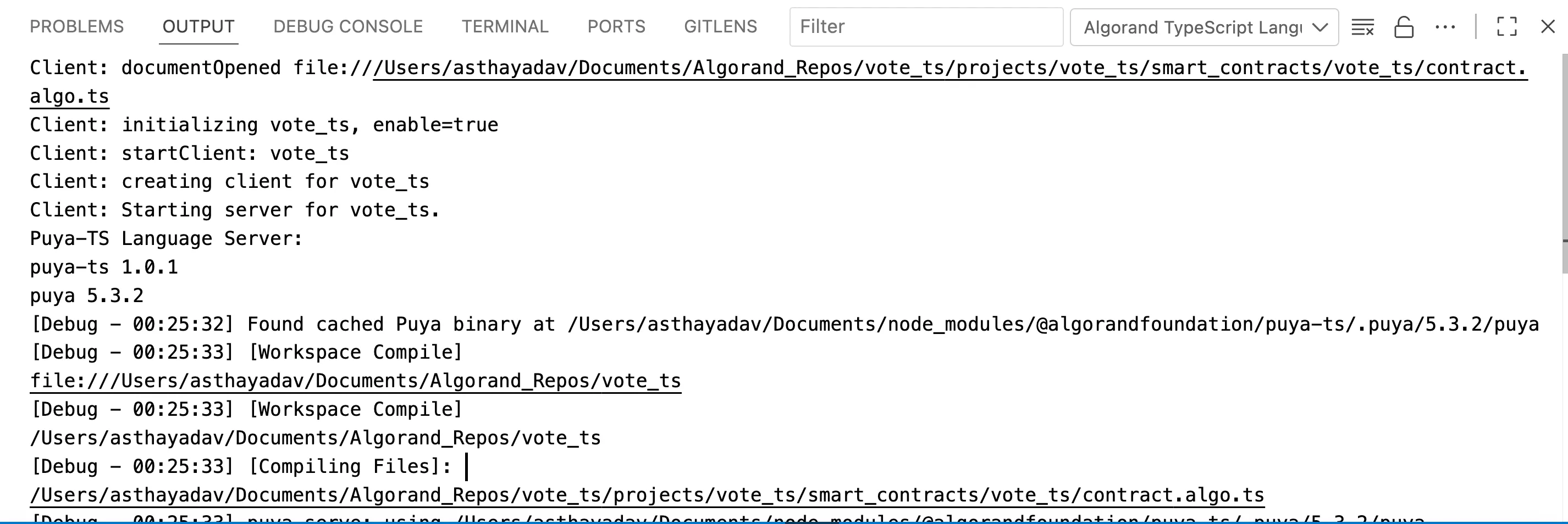

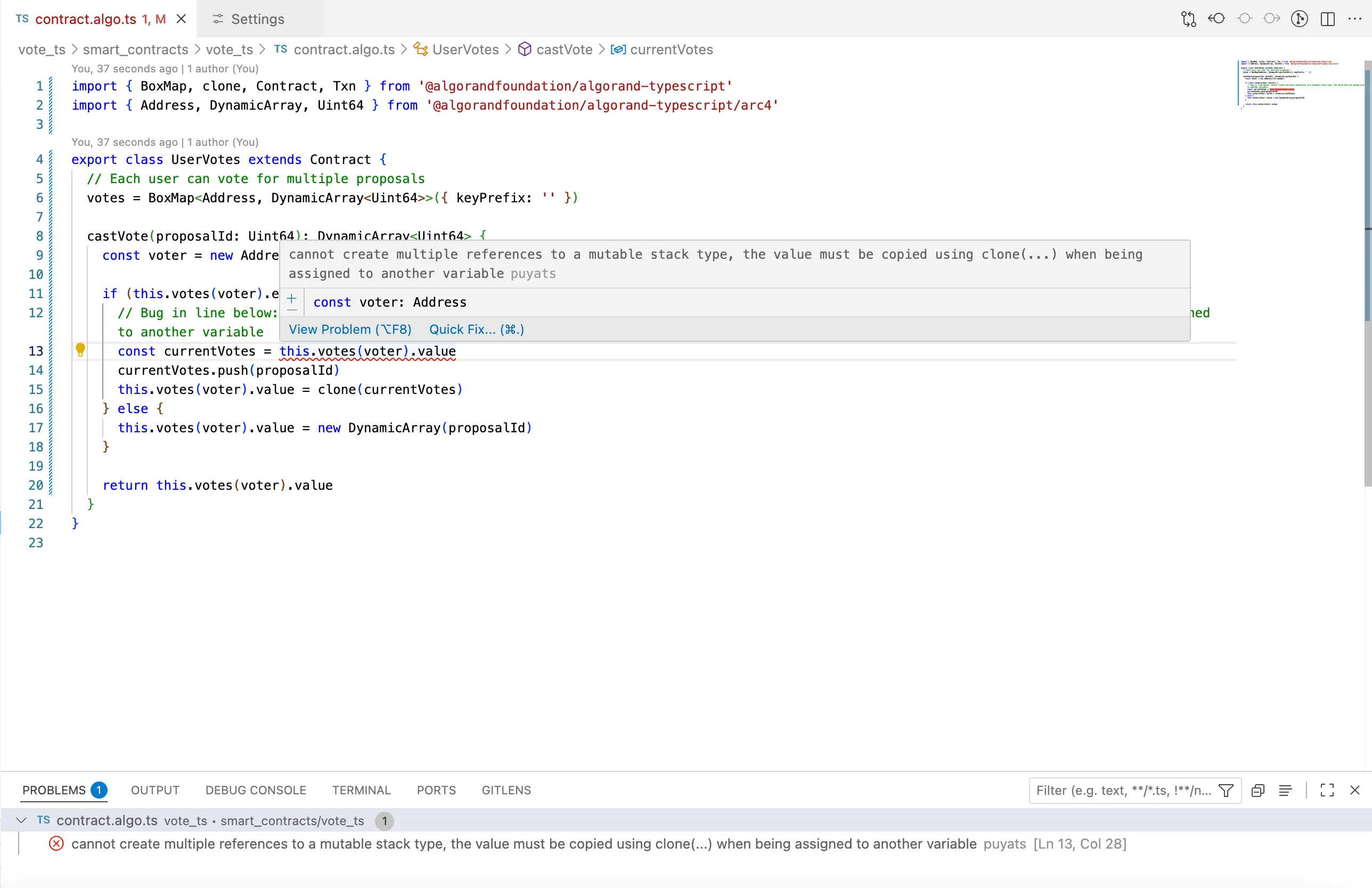

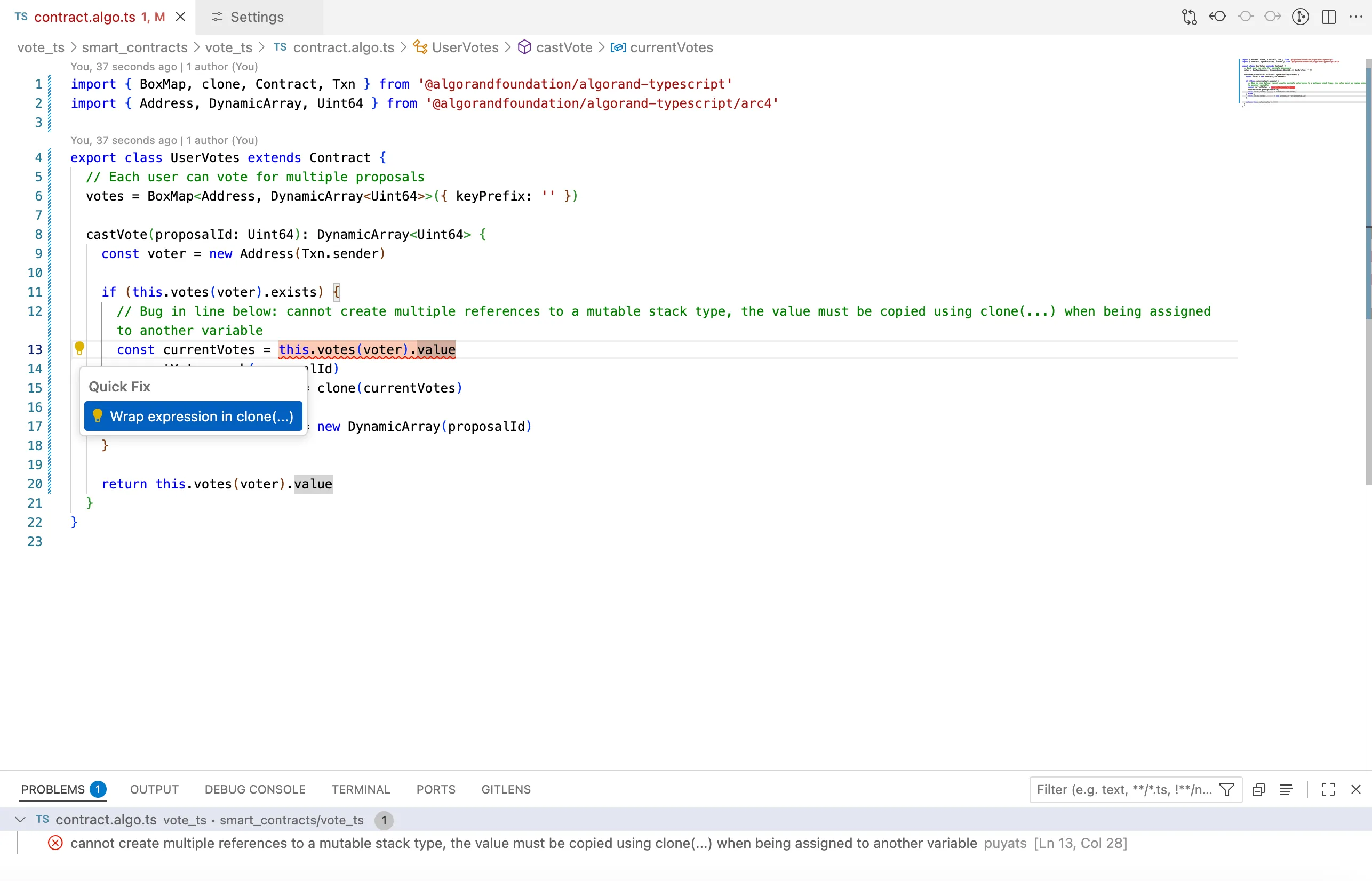

The Algorand VS Code Language Extensions help developers build Algorand smart contracts within Visual Studio Code. Designed to work alongside the standard Python and TypeScript language servers, these extensions extend core IDE functionality with Algorand-specific diagnostics, validation, and code actions.

The [Python extension](https://marketplace.visualstudio.com/items?itemName=AlgorandFoundation.algorand-python-vscode) integrates with the official Python extension and automatically detects the PuyaPy environment to offer real-time, contract-aware analysis and quick fixes, helping developers catch errors early and improve code quality. Similarly, the [TypeScript extension](https://marketplace.visualstudio.com/items?itemName=AlgorandFoundation.algorand-typescript-vscode) supports Algorand TypeScript and its smart contract utilities, providing targeted diagnostics and validation within a familiar developer workflow.

Both extensions give immediate feedback specific to Algorand's blockchain environment, which speeds up learning and reduces common mistakes. They are currently in beta, require Visual Studio Code 1.80.0 or later, and are designed to complement, not replace, existing language tooling.

* [AlgoKit TypeScript Language Server](/algokit/language-servers/algorand-typescript) - Learn more about the TypeScript Language Server

* [AlgoKit Python Language Server](/algokit/language-servers/algorand-python) - Learn more about the Python Language Server

## Client Generator


The client generator generates a type-safe smart contract client for the Algorand Blockchain that wraps the application client in AlgoKit Utils and tailors it to a specific smart contract. It does this by reading an ARC-0032 application spec file and generating a client that exposes methods for each ABI method in the target smart contract, along with helpers to create, update, and delete the application.
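To illustrate what the generator consumes, here is a hedged sketch that reads an ARC-0032 application spec and lists the ARC-4 signature of each ABI method, which is roughly the information a generated client turns into one typed method each. It assumes the ARC-0032 layout in which the ABI methods sit in the `methods` list under the `contract` key (an ARC-4 contract description). It is not the client generator's own code, and `load_app_spec`, `method_signature`, and `method_signatures` are hypothetical names:

```python
import json
from pathlib import Path
from typing import Any


def load_app_spec(path: str) -> dict[str, Any]:
    """Read an ARC-0032 application spec JSON file from disk."""
    return json.loads(Path(path).read_text())


def method_signature(method: dict[str, Any]) -> str:
    """Build an ARC-4 method signature such as 'hello(string)string'."""
    arg_types = ",".join(arg["type"] for arg in method.get("args", []))
    return f"{method['name']}({arg_types}){method['returns']['type']}"


def method_signatures(app_spec: dict[str, Any]) -> list[str]:
    """List the signature of every ABI method declared in the spec."""
    return [method_signature(m) for m in app_spec["contract"]["methods"]]


# Minimal hand-written spec fragment shaped like the HelloWorld contract earlier on this page.
spec = {
    "contract": {
        "name": "HelloWorld",
        "methods": [
            {"name": "hello", "args": [{"type": "string", "name": "name"}], "returns": {"type": "string"}}
        ],
    }
}
print(method_signatures(spec))  # ['hello(string)string']
```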

To learn more about the client generator, refer to the following resources:

* [Client Generator TypeScript Documentation](/algokit/client-generator/typescript) - Learn more about the TypeScript client generator for Algorand smart contracts

* [Client Generator TypeScript Repo](https://github.com/algorandfoundation/algokit-client-generator-ts) - Explore the TypeScript client generator codebase and contribute to its development

* [Client Generator Python Documentation](/algokit/client-generator/python) - Learn more about the Python client generator for Algorand smart contracts

* [Client Generator Python Repo](https://github.com/algorandfoundation/algokit-client-generator-py) - Explore the Python client generator codebase and contribute to its development

## Testnet Dispenser


The AlgoKit TestNet Dispenser API provides functionality for interacting with the Dispenser service, which lets users fund TestNet accounts with Algo and refund Algo back to the dispenser.

To learn more about the TestNet Dispenser, refer to the following resources:

* [TestNet Dispenser Documentation](/docs/algokit-utils/typescript/latest/concepts/advanced/dispenser-client) - Learn more about using and configuring the AlgoKit TestNet Dispenser

* [TestNet Dispenser Repo](https://github.com/algorandfoundation/algokit/blob/main/docs/testnet_api.md) - Explore the technical implementation and contribute to its development

## AlgoKit Tools and Versions


While AlgoKit as a *collection* was bumped to Version 3.0 on March 26, 2025, it is important to note that the individual tools in the kit are on different package version numbers. In the future this may be changed to epoch versioning so that it is clear that all packages are part of the same epoch release.

| Tool | Repository | AlgoKit 3.0 Min Version |
| ------------------------------------------ | ------------------------------- | ----------------------- |
| Command Line Interface (CLI) | algokit-cli | 2.6.0 |
| Utils (Python) | algokit-utils-py | 4.0.0 |
| Utils (TypeScript) | algokit-utils-ts | 9.0.0 |
| Client Generator (Python) | algokit-client-generator-py | 2.1.0 |
| Client Generator (TypeScript) | algokit-client-generator-ts | 5.0.0 |
| Subscriber (Python) | algokit-subscriber-py | 1.0.0 |
| Subscriber (TypeScript) | algokit-subscriber-ts | 3.2.0 |
| Puya Compiler | puya | 4.5.3 |
| Puya Compiler, TypeScript | puya-ts | 1.0.0-beta.58 |
| AVM Unit Testing (Python) | algorand-python-testing | 0.5.0 |
| AVM Unit Testing (TypeScript) | algorand-typescript-testing | 1.0.0-beta.30 |
| Lora the Explorer | algokit-lora | 1.2.0 |
| AVM VSCode Debugger | algokit-avm-vscode-debugger | 1.1.5 |
| Utils Add-On for TypeScript Debugging | algokit-utils-ts-debug | 1.0.4 |
| Base Project Template | algokit-base-template | 1.1.0 |
| Python Smart Contract Project Template | algokit-python-template | 1.6.0 |
| TypeScript Smart Contract Project Template | algokit-typescript-template | 0.3.1 |
| React Vite Frontend Project Template | algokit-react-frontend-template | 1.1.1 |
| Fullstack Project Template | algokit-fullstack-template | 2.1.4 |
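Because each tool is versioned independently, one way to audit a project is to compare installed versions against the minimums in the table. The following is a minimal sketch, assuming plain `MAJOR.MINOR.PATCH` versions with an optional pre-release suffix (as in `1.0.0-beta.58`), ordered by semantic-versioning precedence. Collecting the installed versions (for example from `pip` or `npm`) is left out, and the function names are hypothetical:

```python
# A subset of the AlgoKit 3.0 minimums from the table.
ALGOKIT_3_MINIMUMS = {
    "algokit-cli": "2.6.0",
    "algokit-utils-ts": "9.0.0",
    "puya-ts": "1.0.0-beta.58",
}


def _prerelease_key(pre: str) -> tuple:
    """Order pre-release tags per semver: numeric parts numerically, text lexically."""
    if not pre:
        return (1,)  # a final release sorts after any pre-release of the same version
    parts = []
    for ident in pre.split("."):
        if ident.isdigit():
            parts.append((0, int(ident), ""))  # numeric identifiers sort before text ones
        else:
            parts.append((1, 0, ident))
    return (0, tuple(parts))


def version_key(version: str) -> tuple:
    """Turn 'MAJOR.MINOR.PATCH[-pre][+build]' into a sortable key."""
    version = version.split("+", 1)[0]  # build metadata does not affect precedence
    core, _, pre = version.partition("-")
    major, minor, patch = (int(part) for part in core.split("."))
    return ((major, minor, patch), _prerelease_key(pre))


def meets_minimum(installed: str, minimum: str) -> bool:
    return version_key(installed) >= version_key(minimum)


def below_minimum(installed: dict[str, str], minimums: dict[str, str]) -> list[str]:
    """Return the installed tools whose version is older than the required minimum."""
    return [
        name
        for name, minimum in minimums.items()
        if name in installed and not meets_minimum(installed[name], minimum)
    ]


print(below_minimum({"algokit-cli": "2.5.0", "puya-ts": "1.0.0-beta.60"}, ALGOKIT_3_MINIMUMS))
# ['algokit-cli']
```

Comparing numerically matters here: a plain string comparison would wrongly rank `1.0.0-beta.9` above `1.0.0-beta.30`.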

## Install


Note

Refer to [Troubleshooting](#troubleshooting) for more details on mitigation of known edge cases when installing AlgoKit.

### Prerequisites


The installation prerequisites change depending on the method you use to install. Please refer to [Installation Methods](#installation-methods).

Depending on the features you choose to leverage from the AlgoKit CLI, additional dependencies may be required. The AlgoKit CLI will tell you if you are missing one for a given command. These optional dependencies are:

* **Git**: Essential for creating and updating projects from templates. Installation guide available at [Git Installation](https://git-scm.com/book/en/v2/Getting-Started-Installing-Git).

* **Docker**: Necessary for running the AlgoKit LocalNet environment. Docker Compose version 2.5.0 or higher is required. See [Docker Installation](https://docs.docker.com/get-docker/).

* **Python**: For those installing the AlgoKit CLI via `pipx` or building contracts using Algorand Python. **Minimum required version is Python 3.12+ when working with Algorand Python**. See [Python Installation](https://www.python.org/downloads/).

* **Node.js**: For those working on frontend templates or building contracts using Algorand TypeScript or TEALScript. **Minimum required versions are Node.js `v22` and npm `v10`**. See [Node.js Installation](https://nodejs.org/en/download/).
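The AlgoKit CLI reports missing dependencies itself, but if you want to check a machine up front, a small standard-library sketch like the one below can help. It only looks for executables on your `PATH` (the executable names `git`, `docker`, `node`, and `npm` are assumptions about how these tools are installed) and checks the Python 3.12+ requirement for Algorand Python; it does not check the Docker Compose, Node.js, or npm versions. The function names are hypothetical:

```python
import shutil
import sys
from typing import Iterable

# Executable names assumed for the optional dependencies listed above.
OPTIONAL_TOOLS = ("git", "docker", "node", "npm")


def missing_tools(tools: Iterable[str] = OPTIONAL_TOOLS) -> list[str]:
    """Return the tools that cannot be found on the PATH."""
    return [tool for tool in tools if shutil.which(tool) is None]


def supports_algorand_python(version_info: tuple = sys.version_info) -> bool:
    """Algorand Python needs Python 3.12 or later."""
    return tuple(version_info[:2]) >= (3, 12)


if __name__ == "__main__":
    print("Missing tools:", missing_tools() or "none")
    print("Python OK for Algorand Python:", supports_algorand_python())
```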

Note

If you have previously installed AlgoKit using `pipx` and would like to switch to a different installation method, please ensure that you first uninstall the existing version by running `pipx uninstall algokit`. Once uninstalled, you can follow the installation instructions for your preferred platform.

### Cross-platform installation


AlgoKit can be installed using OS-specific package managers or using the Python tool [pipx](https://pypa.github.io/pipx/). See below for specific installation instructions.

#### Installation Methods


* [Windows](#install-algokit-on-windows)

* [Mac](#install-algokit-on-mac)

* [Linux](#install-algokit-on-linux)

* [Universal via pipx](#install-algokit-with-pipx-on-any-os)

### Install AlgoKit on Windows


Note

AlgoKit is supported on Windows 10 1709 (build 16299) and later. We only publish an x64 binary; however, it also runs on ARM devices by default using the built-in x64 emulation feature.

1. Ensure prerequisites are installed

   * [WinGet](https://learn.microsoft.com/en-us/windows/package-manager/winget/) (should be installed by default on recent versions of Windows 10 and later)

   * [Git](https://github.com/git-guides/install-git#install-git-on-windows) (or `winget install git.git`)

   * [Docker](https://docs.docker.com/desktop/install/windows-install/) (or `winget install docker.dockerdesktop`)

     Note

     See [our LocalNet documentation](https://github.com/algorandfoundation/algokit-cli/blob/main/docs/features/localnet.md#prerequisites) for more tips on installing Docker on Windows.

   * [Microsoft C++ Build Tools](https://visualstudio.microsoft.com/visual-cpp-build-tools/)

2. Install using winget

   ```shell
   winget install algokit
   ```

3. [Verify installation](#verify-installation)

#### Maintenance


Some useful commands for updating or removing AlgoKit in the future.

* To update AlgoKit: `winget upgrade algokit`

* To remove AlgoKit: `winget uninstall algokit`

### Install AlgoKit on Mac


Note

AlgoKit is supported on macOS Big Sur (11) and later for both x64 and ARM (Apple Silicon)

1. Ensure prerequisites are installed

   * [Homebrew](https://docs.brew.sh/Installation)

   * [Git](https://github.com/git-guides/install-git#install-git-on-mac) (should already be available if `brew` is installed)

   * [Docker](https://docs.docker.com/desktop/install/mac-install/) (or `brew install --cask docker`)

     Note

     Docker requires macOS 11+

2. Install using Homebrew

   ```shell
   brew install algorandfoundation/tap/algokit
   ```

3. Restart the terminal to ensure AlgoKit is available on the path

4. [Verify installation](#verify-installation)

#### Maintenance


Some useful commands for updating or removing AlgoKit in the future.

* To update AlgoKit: `brew upgrade algokit`

* To remove AlgoKit: `brew uninstall algokit`

### Install AlgoKit on Linux


Note

AlgoKit is compatible with Ubuntu 16.04 and later, Debian, RedHat, and any distribution that supports [Snap](https://snapcraft.io/docs/installing-snapd), but it is only supported on x64 architecture; ARM is not supported.

1. Ensure prerequisites are installed

   * [Snap](https://snapcraft.io/docs/installing-snapd) (should be installed by default on Ubuntu 16.04.4 LTS (Xenial Xerus) or later)

   * [Git](https://github.com/git-guides/install-git#install-git-on-linux)

   * [Docker](https://docs.docker.com/desktop/install/linux-install/)

2. Install using snap

   ```shell
   sudo snap install algokit --classic
   ```

   > For detailed guidelines for each supported Linux distribution, refer to the [Snap Store](https://snapcraft.io/algokit).

3. [Verify installation](#verify-installation)

#### Maintenance


Some useful commands for updating or removing AlgoKit in the future.

* To update AlgoKit: `snap refresh algokit`

* To remove AlgoKit: `snap remove --purge algokit`

### Install AlgoKit with pipx on any OS


1. Ensure desired prerequisites are installed

   * [Python 3.10+](https://www.python.org/downloads/)

   * [pipx](https://pypa.github.io/pipx/installation/)

   * [Git](https://github.com/git-guides/install-git)

   * [Docker](https://docs.docker.com/get-docker/)

2. Install using pipx

   ```shell
   pipx install algokit
   ```

3. Restart the terminal to ensure AlgoKit is available on the path

4. [Verify installation](#verify-installation)

#### Maintenance


Some useful commands for updating or removing AlgoKit in the future.

* To update AlgoKit: `pipx upgrade algokit`

* To remove AlgoKit: `pipx uninstall algokit`

### Verify installation


Verify AlgoKit is installed correctly by running `algokit --version` and you should see output similar to:

```plaintext
algokit, version 1.0.1
```
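If you want to script this check (for example in CI), a hedged sketch is below. It assumes the output keeps the `algokit, version X.Y.Z` shape of the sample output; `parse_algokit_version` and `installed_algokit_version` are hypothetical helpers, not part of AlgoKit:

```python
import re
import subprocess

# Matches output of the form "algokit, version 1.0.1".
_VERSION_LINE = re.compile(r"^algokit, version (\S+)")


def parse_algokit_version(output: str) -> str:
    """Extract the version string from `algokit --version` output."""
    match = _VERSION_LINE.match(output.strip())
    if match is None:
        raise ValueError(f"unexpected `algokit --version` output: {output!r}")
    return match.group(1)


def installed_algokit_version() -> str:
    """Run `algokit --version`; raises FileNotFoundError if algokit is not on the PATH."""
    result = subprocess.run(
        ["algokit", "--version"], capture_output=True, text=True, check=True
    )
    return parse_algokit_version(result.stdout)
```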

Note

If you receive one of the following errors:

* `command not found: algokit` (bash/zsh)

* `The term 'algokit' is not recognized as the name of a cmdlet, function, script file, or operable program.` (PowerShell)

then ensure that `algokit` is available on the PATH (for pipx installations, run `pipx ensurepath`) and restart the terminal.

It is also recommended that you run `algokit doctor` to verify there are no issues in your local environment and to diagnose any problems if you do have difficulties running AlgoKit. The output of this command will look similar to:

```plaintext
timestamp: 2023-03-27T01:23:45+00:00
AlgoKit: 1.0.1
AlgoKit Python: 3.11.1 (main, Dec 23 2022, 09:28:24) [Clang 14.0.0 (clang-1400.0.29.202)] (location: /Users/algokit/.local/pipx/venvs/algokit)
OS: macOS-13.1-arm64-arm-64bit
docker: 20.10.21
docker compose: 2.13.0
git: 2.37.1
python: 3.10.9 (location: /opt/homebrew/bin/python)
python3: 3.10.9 (location: /opt/homebrew/bin/python3)
pipx: 1.1.0
poetry: 1.3.2
node: 18.12.1
npm: 8.19.2
brew: 3.6.18

If you are experiencing a problem with AlgoKit, feel free to submit an issue via:
https://github.com/algorandfoundation/algokit-cli/issues/new
Please include this output, if you want to populate this message in your clipboard, run `algokit doctor -c`
```

Per the above output, the doctor command output is a helpful tool if you need to ask for support or [raise an issue](https://github.com/algorandfoundation/algokit-cli/issues/new).
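When triaging a problem yourself, it can help to turn the doctor report into data. The sketch below assumes the `name: value` line format of the sample output (not an official format guarantee) and checks the Docker Compose 2.5.0+ requirement from the prerequisites; `parse_doctor_output` and `compose_version_ok` are hypothetical helpers:

```python
import re

_SEMVER_PREFIX = re.compile(r"(\d+)\.(\d+)\.(\d+)")


def parse_doctor_output(text: str) -> dict[str, str]:
    """Collect 'name: value' lines from `algokit doctor` output into a dict."""
    report = {}
    for line in text.splitlines():
        # Split on the first ": " only, so values such as timestamps keep their colons.
        name, sep, value = line.partition(": ")
        if sep:
            report[name.strip()] = value.strip()
    return report


def compose_version_ok(report: dict[str, str], minimum: tuple = (2, 5, 0)) -> bool:
    """True if the reported `docker compose` version is at least `minimum`."""
    match = _SEMVER_PREFIX.match(report.get("docker compose", ""))
    if match is None:
        return False
    return tuple(int(group) for group in match.groups()) >= minimum


sample = """timestamp: 2023-03-27T01:23:45+00:00
AlgoKit: 1.0.1
docker compose: 2.13.0
git: 2.37.1"""
report = parse_doctor_output(sample)
print(report["docker compose"], compose_version_ok(report))  # 2.13.0 True
```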

### Troubleshooting


This section addresses specific edge cases and issues that some users might encounter when interacting with the CLI. The following table provides solutions to known edge cases:

| Issue Description | OS(s) with observed behaviour | Steps to mitigate | References |
| --- | --- | --- | --- |
| This scenario may arise if the installed `python` was built without the `--with-ssl` flag enabled, causing pip to fail when trying to install dependencies. | Debian 12 | Run `sudo apt-get install -y libssl-dev` to install the required OpenSSL dependency, then reinstall Python with the `--with-ssl` flag enabled, for example by [building Python from source code](https://medium.com/@enahwe/how-to-06bc8a042345) or using a tool like [pyenv](https://github.com/pyenv/pyenv). | [GitHub Issue](https://github.com/actions/setup-python/issues/93) |
| `poetry install`, invoked directly or via `algokit project bootstrap all`, fails with `Could NOT find PkgConfig (missing: PKG_CONFIG_EXECUTABLE)`. | macOS >= 14 using `python` 3.13 installed via `homebrew` | Install `pkg-config`, a dependency deprecated in Python `3.13` and the latest macOS versions, via `brew install pkg-config`; then delete the virtual environment folder and rerun `poetry install`. | N/A |
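For the Debian 12 case, you can confirm whether your interpreter is affected before reinstalling anything: a Python built without SSL support cannot import the standard `ssl` module. A minimal check, assuming you run it with the same `python` that pip uses (`python_has_ssl` is a hypothetical helper name):

```python
import importlib


def python_has_ssl() -> bool:
    """Return True if this interpreter can import the standard ssl module."""
    try:
        importlib.import_module("ssl")  # raises ImportError when built without SSL support
    except ImportError:
        return False
    return True


if __name__ == "__main__":
    print("SSL available:", python_has_ssl())
```

If this prints `False`, reinstalling Python as described in the table is the relevant fix; reinstalling AlgoKit alone will not help.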

# AVM Debugger

> Tutorial on how to debug a smart contract using AVM Debugger

The AVM VSCode debugger enables inspection of blockchain logic through `Simulate Traces`: JSON files containing detailed transaction execution data, produced without on-chain deployment. The extension requires both trace files and source maps that link the original code (TEAL or Puya) to the compiled instructions. While the extension works independently, projects created with AlgoKit templates include utilities that automatically generate these debugging artifacts. For the full list of the debugger extension's capabilities, refer to this [documentation](https://github.com/microsoft/vscode-mock-debug).

This tutorial demonstrates the workflow using a Python-based Algorand project. We'll start with a simple smart contract containing a deliberate bug, then use the Algorand Virtual Machine (AVM) Debugger to set up a debugging session, pinpoint the issue, and fix it.

[Debugging with AlgoKit 3.0](https://www.youtube.com/embed/yPWRlmmTSHA?rel=0)

## Prerequisites


* Visual Studio Code (version 1.80.0 or higher)

* Node.js (version 18.x or higher)

* [AlgoKit CLI](/algokit/algokit-intro) installed

* [AlgoKit AVM VSCode Debugger](https://marketplace.visualstudio.com/items?itemName=AlgorandFoundation.algokit-avm-vscode-debugger) extension installed

* Basic understanding of [Algorand smart contracts using Python](/concepts/smart-contracts/languages/python)

Note

The extension is designed to debug both raw TEAL source maps and source maps generated by the Puya compiler on the Algorand Virtual Machine. It provides a step-by-step debugging experience by using transaction execution traces and the compiled source maps of your smart contract.

## Step 1: Set Up the Debugging Environment


Install the AlgoKit AVM VSCode Debugger extension from the VS Code Marketplace: open the Extensions view in VS Code, search for "AlgoKit AVM Debugger", and click Install. You should see output like the following:

## Step 2: Set Up the Example Smart Contract


We will debug smart contract code in a project generated via `algokit init`. For setup instructions, refer to [AlgoKit](/algokit/algokit-intro). Below is the Algorand Python code for a `TicTacToe` smart contract. The bug is in the `move` method. When a game ends, `games_played` is incremented by `2` for the guest and by `1` for the host, but it should be incremented by `1` for both players.

First, remove the `hello_world` folder. Next, create a new tic-tac-toe smart contract starter via `algokit generate smart-contract -a contract_name "TicTacToe"`. Finally, replace the content of its `contract.py` with the code below.


```py
# pyright: reportMissingModuleSource=false
from typing import Literal, Tuple, TypeAlias

from algopy import (
    ARC4Contract,
    BoxMap,
    Global,
    LocalState,
    OnCompleteAction,
    Txn,
    UInt64,
    arc4,
    gtxn,
    itxn,
    op,
    subroutine,
    urange,
)

Board: TypeAlias = arc4.StaticArray[arc4.Byte, Literal[9]]
HOST_MARK = 1
GUEST_MARK = 2


class GameState(arc4.Struct, kw_only=True):
    board: Board
    host: arc4.Address
    guest: arc4.Address
    is_over: arc4.Bool
    turns: arc4.UInt8


class TicTacToe(ARC4Contract):
    def __init__(self) -> None:
        self.id_counter = UInt64(0)
        self.games_played = LocalState(UInt64)
        self.games_won = LocalState(UInt64)
        self.games = BoxMap(UInt64, GameState)

    @subroutine
    def opt_in(self) -> None:
        self.games_played[Txn.sender] = UInt64(0)
        self.games_won[Txn.sender] = UInt64(0)

    @arc4.abimethod(allow_actions=[OnCompleteAction.NoOp, OnCompleteAction.OptIn])
    def new_game(self, mbr: gtxn.PaymentTransaction) -> UInt64:
        if Txn.on_completion == OnCompleteAction.OptIn:
            self.opt_in()
        self.id_counter += 1

        # The payment must cover the increase in the app's minimum balance (box MBR)
        assert mbr.receiver == Global.current_application_address
        pre_new_game_box, exists = op.AcctParamsGet.acct_min_balance(
            Global.current_application_address
        )
        assert exists
        self.games[self.id_counter] = GameState(
            board=arc4.StaticArray[arc4.Byte, Literal[9]].from_bytes(op.bzero(9)),
            host=arc4.Address(Txn.sender),
            guest=arc4.Address(),
            is_over=arc4.Bool(False),  # noqa: FBT003
            turns=arc4.UInt8(),
        )
        post_new_game_box, exists = op.AcctParamsGet.acct_min_balance(
            Global.current_application_address
        )
        assert exists
        assert mbr.amount == (post_new_game_box - pre_new_game_box)

        return self.id_counter

    @arc4.abimethod
    def delete_game(self, game_id: UInt64) -> None:
        game = self.games[game_id].copy()

        assert game.guest == arc4.Address() or game.is_over.native
        assert Txn.sender == self.games[game_id].host.native

        pre_del_box, exists = op.AcctParamsGet.acct_min_balance(
            Global.current_application_address
        )
        assert exists
        del self.games[game_id]
        post_del_box, exists = op.AcctParamsGet.acct_min_balance(
            Global.current_application_address
        )
        assert exists

        # Refund the freed box MBR to the host
        itxn.Payment(
            receiver=game.host.native, amount=pre_del_box - post_del_box
        ).submit()

    @arc4.abimethod(allow_actions=[OnCompleteAction.NoOp, OnCompleteAction.OptIn])
    def join(self, game_id: UInt64) -> None:
        if Txn.on_completion == OnCompleteAction.OptIn:
            self.opt_in()
        assert self.games[game_id].host.native != Txn.sender
        assert self.games[game_id].guest == arc4.Address()

        self.games[game_id].guest = arc4.Address(Txn.sender)

    @arc4.abimethod
    def move(self, game_id: UInt64, x: UInt64, y: UInt64) -> None:
        game = self.games[game_id].copy()

        assert not game.is_over.native
        assert game.board[self.coord_to_matrix_index(x, y)] == arc4.Byte()
        assert Txn.sender == game.host.native or Txn.sender == game.guest.native
        is_host = Txn.sender == game.host.native

        if is_host:
            assert game.turns.native % 2 == 0
            self.games[game_id].board[self.coord_to_matrix_index(x, y)] = arc4.Byte(
                HOST_MARK
            )
        else:
            assert game.turns.native % 2 == 1
            self.games[game_id].board[self.coord_to_matrix_index(x, y)] = arc4.Byte(
                GUEST_MARK
            )

        self.games[game_id].turns = arc4.UInt8(
            self.games[game_id].turns.native + UInt64(1)
        )

        is_over, is_draw = self.is_game_over(self.games[game_id].board.copy())
        if is_over:
            self.games[game_id].is_over = arc4.Bool(True)
            self.games_played[game.host.native] += UInt64(1)
            self.games_played[game.guest.native] += UInt64(2)  # incorrect code here

            if not is_draw:
                winner = game.host if is_host else game.guest
                self.games_won[winner.native] += UInt64(1)

    @arc4.baremethod(allow_actions=[OnCompleteAction.CloseOut])
    def close_out(self) -> None:
        pass

    @subroutine
    def coord_to_matrix_index(self, x: UInt64, y: UInt64) -> UInt64:
        return 3 * y + x

    @subroutine
    def is_game_over(self, board: Board) -> Tuple[bool, bool]:
        for i in urange(3):
            # Row check
            if board[3 * i] == board[3 * i + 1] == board[3 * i + 2] != arc4.Byte():
                return True, False

            # Column check
            if board[i] == board[i + 3] == board[i + 6] != arc4.Byte():
                return True, False

        # Diagonal check
        if board[0] == board[4] == board[8] != arc4.Byte():
            return True, False
        if board[2] == board[4] == board[6] != arc4.Byte():
            return True, False

        # Draw check: nobody has won and every cell is occupied
        for i in urange(9):
            if board[i] == arc4.Byte():
                return False, False
        return True, True
```

Add the following deployment code to the `deploy_config.py` file in the same folder:


```py
import base64
import logging

import algokit_utils
import algosdk.abi
from algokit_utils import (
    EnsureBalanceParameters,
    TransactionParameters,
    ensure_funded,
)
from algokit_utils.beta.algorand_client import AlgorandClient
from algokit_utils.beta.client_manager import AlgoSdkClients
from algokit_utils.beta.composer import PayParams
from algosdk.atomic_transaction_composer import TransactionWithSigner
from algosdk.util import algos_to_microalgos
from algosdk.v2client.algod import AlgodClient
from algosdk.v2client.indexer import IndexerClient

logger = logging.getLogger(__name__)


# define deployment behaviour based on supplied app spec
def deploy(
    algod_client: AlgodClient,
    indexer_client: IndexerClient,
    app_spec: algokit_utils.ApplicationSpecification,
    deployer: algokit_utils.Account,
) -> None:
    from smart_contracts.artifacts.tictactoe.tic_tac_toe_client import (
        TicTacToeClient,
    )

    app_client = TicTacToeClient(
        algod_client,
        creator=deployer,
        indexer_client=indexer_client,
    )
    app_client.deploy(
        on_schema_break=algokit_utils.OnSchemaBreak.AppendApp,
        on_update=algokit_utils.OnUpdate.AppendApp,
    )
    last_game_id = app_client.get_global_state().id_counter

    algorand = AlgorandClient.from_clients(AlgoSdkClients(algod_client, indexer_client))
    algorand.set_suggested_params_timeout(0)

    # Create and fund the host account
    host = algorand.account.random()
    ensure_funded(
        algorand.client.algod,
        EnsureBalanceParameters(
            account_to_fund=host.address,
            min_spending_balance_micro_algos=algos_to_microalgos(200_000),
        ),
    )
    print("balance of host address:", algod_client.account_info(host.address)["amount"])
    print("host address:", host.address)

    # Fund the application account
    ensure_funded(
        algorand.client.algod,
        EnsureBalanceParameters(
            account_to_fund=app_client.app_address,
            min_spending_balance_micro_algos=algos_to_microalgos(10_000),
        ),
    )
    print("app_client address:", app_client.app_address)

    # The host opts in and creates a game. The payment covers the box MBR:
    # 2_500 + 400 * (key bytes + value bytes), where the key is the 5-byte "games"
    # prefix plus an 8-byte game ID, and the encoded GameState value is 75 bytes.
    game_id = app_client.opt_in_new_game(
        mbr=TransactionWithSigner(
            txn=algorand.transactions.payment(
                PayParams(
                    sender=host.address,
                    receiver=app_client.app_address,
                    amount=2_500 + 400 * (5 + 8 + 75),
                )
            ),
            signer=host.signer,
        ),
        transaction_parameters=TransactionParameters(
            signer=host.signer,
            sender=host.address,
            boxes=[(0, b"games" + (last_game_id + 1).to_bytes(8, "big"))],
        ),
    )

    # Create and fund the guest account, then join the game
    guest = algorand.account.random()
    ensure_funded(
        algorand.client.algod,
        EnsureBalanceParameters(
            account_to_fund=guest.address,
            min_spending_balance_micro_algos=algos_to_microalgos(10),
        ),
    )
    app_client.opt_in_join(
        game_id=game_id.return_value,
        transaction_parameters=TransactionParameters(
            signer=guest.signer,
            sender=guest.address,
            boxes=[(0, b"games" + game_id.return_value.to_bytes(8, "big"))],
        ),
    )

    # Alternate host and guest moves; the guest's third move completes column x=2
    moves = [
        ((0, 0), (2, 2)),
        ((1, 1), (2, 1)),
        ((0, 2), (2, 0)),
    ]
    for host_move, guest_move in moves:
        app_client.move(
            game_id=game_id.return_value,
            x=host_move[0],
            y=host_move[1],
            transaction_parameters=TransactionParameters(
                signer=host.signer,
                sender=host.address,
                boxes=[(0, b"games" + game_id.return_value.to_bytes(8, "big"))],
                accounts=[guest.address],
            ),
        )
        app_client.move(
            game_id=game_id.return_value,
            x=guest_move[0],
            y=guest_move[1],
            transaction_parameters=TransactionParameters(
                signer=guest.signer,
                sender=guest.address,
                boxes=[(0, b"games" + game_id.return_value.to_bytes(8, "big"))],
                accounts=[host.address],
            ),
        )

    # Read the game box back and decode it as the ARC-4 GameState tuple
    game_state = algosdk.abi.TupleType(
        [
            algosdk.abi.ArrayStaticType(algosdk.abi.ByteType(), 9),
            algosdk.abi.AddressType(),
            algosdk.abi.AddressType(),
            algosdk.abi.BoolType(),
            algosdk.abi.UintType(8),
        ]
    ).decode(
        base64.b64decode(
            algorand.client.algod.application_box_by_name(
                app_client.app_id,
                box_name=b"games" + game_id.return_value.to_bytes(8, "big"),
            )["value"]
        )
    )
    # Field 3 is `is_over`: the game must have ended
    assert game_state[3]
```
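The next sketch shows why the deploy script's move sequence ends the game, and what `games_played` should look like afterwards. It is a plain-Python model of the contract's `coord_to_matrix_index` and win checks. In this model, the draw check is expressed as "every cell is occupied". It is an off-chain sketch for reasoning only and does not run on the AVM. The helper `play` and the `guest_increment` parameter are hypothetical.

```python
HOST_MARK, GUEST_MARK, EMPTY = 1, 2, 0

# Every row, column and diagonal of the 3x3 board, as flat indices
LINES = [
    (0, 1, 2), (3, 4, 5), (6, 7, 8),  # rows
    (0, 3, 6), (1, 4, 7), (2, 5, 8),  # columns
    (0, 4, 8), (2, 4, 6),  # diagonals
]


def coord_to_matrix_index(x: int, y: int) -> int:
    return 3 * y + x


def is_game_over(board: list[int]) -> tuple[bool, bool]:
    """Return (is_over, is_draw) for a flat 9-cell board."""
    for a, b, c in LINES:
        if board[a] == board[b] == board[c] != EMPTY:
            return True, False
    if all(cell != EMPTY for cell in board):
        return True, True
    return False, False


def play(moves, guest_increment: int = 1):
    """Replay (host_move, guest_move) pairs; return board, games_played and winner.

    guest_increment=2 reproduces the planted bug; 1 is the intended behaviour.
    """
    board = [EMPTY] * 9
    games_played = {"host": 0, "guest": 0}
    for host_move, guest_move in moves:
        for player, mark, (x, y) in (
            ("host", HOST_MARK, host_move),
            ("guest", GUEST_MARK, guest_move),
        ):
            index = coord_to_matrix_index(x, y)
            assert board[index] == EMPTY, "cell already taken"
            board[index] = mark
            is_over, is_draw = is_game_over(board)
            if is_over:
                games_played["host"] += 1
                games_played["guest"] += guest_increment
                # As in the contract, the player who just moved is the winner
                return board, games_played, None if is_draw else player
    return board, games_played, None
```

Replaying the script's moves, the guest wins by filling cells 2, 5 and 8. With `guest_increment=1`, both counters end at 1. Passing `2` reproduces the guest value you will observe in the debugger.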

## Step 3: Compile & Deploy the Smart Contract


To enable debug mode and full tracing of every execution step, add the following to the `smart_contracts/__main__.py` file:

```python

from algokit_utils.config import config

config.configure(debug=True, trace_all=True)

```

For more details, refer to [Debugger](/docs/algokit-utils/python/latest/concepts/advanced/debugging).

Next, compile the smart contract using AlgoKit:

```bash

algokit project run build

```

This generates the following files in the artifacts folder: `approval.teal`, `clear.teal`, `clear.puya.map`, `approval.puya.map` and `arc32.json`. The `.puya.map` files are produced by the puyapy compiler, which `algokit project run build` invokes automatically. The compiler's `--output-source-maps` option is enabled by default.

Deploy the smart contract on localnet:

```bash

algokit project deploy localnet

```

This automatically generates `*.appln.trace.avm.json` files in the `debug_traces` folder. It also generates `.teal` and `.teal.map` files in the `sources` folder.
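After several deployments, picking the most recent trace by hand gets tedious. Here is a minimal sketch that assumes the traces sit in a `debug_traces` folder under the project root and end in `.trace.avm.json`, as described above. `latest_trace` is a hypothetical helper and is not part of AlgoKit.

```python
from pathlib import Path
from typing import Optional


def latest_trace(project_root: Path) -> Optional[Path]:
    """Return the most recently modified *.trace.avm.json file, or None if there is none."""
    trace_dir = project_root / "debug_traces"
    if not trace_dir.is_dir():
        return None
    traces = sorted(trace_dir.glob("*.trace.avm.json"), key=lambda p: p.stat().st_mtime)
    return traces[-1] if traces else None
```

Sorting by modification time rather than by file name avoids relying on any particular naming scheme for the trace files.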

The `.teal.map` files are TEAL source maps. They are generated automatically every time an app is deployed via `algokit-utils`. Even if you only plan to debug with Puya source maps, the TEAL source maps are always available as a fallback if you need to step through lower-level code.
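Source maps store their `mappings` as Base64 VLQ segments, following the Source Map v3 encoding. The sketch below shows how a TEAL map can link program counters back to source lines. It assumes one `;`-separated entry per program counter, where the third field of each entry is the source-line delta. That per-pc layout is an assumption here, and `decode_vlq` and `pc_to_line` are illustrative names rather than an AlgoKit API.

```python
B64_ALPHABET = "ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz0123456789+/"


def decode_vlq(segment: str) -> list[int]:
    """Decode one Base64 VLQ segment (Source Map v3) into signed integers."""
    values: list[int] = []
    shift = accumulator = 0
    for char in segment:
        digit = B64_ALPHABET.index(char)
        accumulator += (digit & 0b11111) << shift  # low 5 bits carry data
        if digit & 0b100000:  # continuation bit: more digits follow
            shift += 5
            continue
        magnitude = accumulator >> 1  # lowest bit of the value is the sign
        values.append(-magnitude if accumulator & 1 else magnitude)
        shift = accumulator = 0
    return values


def pc_to_line(mappings: str) -> dict[int, int]:
    """Map program counters to 0-based source lines (assumes one ';' entry per pc)."""
    lines: dict[int, int] = {}
    line = 0
    for pc, entry in enumerate(mappings.split(";")):
        if not entry:
            continue  # nothing recorded for this program counter
        fields = decode_vlq(entry.split(",")[0])
        line += fields[2]  # third field: source-line delta from the previous entry
        lines[pc] = line
    return lines
```

You can load a map with `json.load` and pass its `mappings` string to `pc_to_line`. The result is a rough view of which TEAL line each program counter belongs to, which is the kind of lookup a debugger needs in order to highlight the current line.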

### Expected Behavior


The expected behavior is that `games_played` is incremented by `1` for both the guest and the host.

### Bug


When a call to the `move` method ends a game, `games_played` is updated incorrectly for the guest player.

## Step 4: Start the debugger


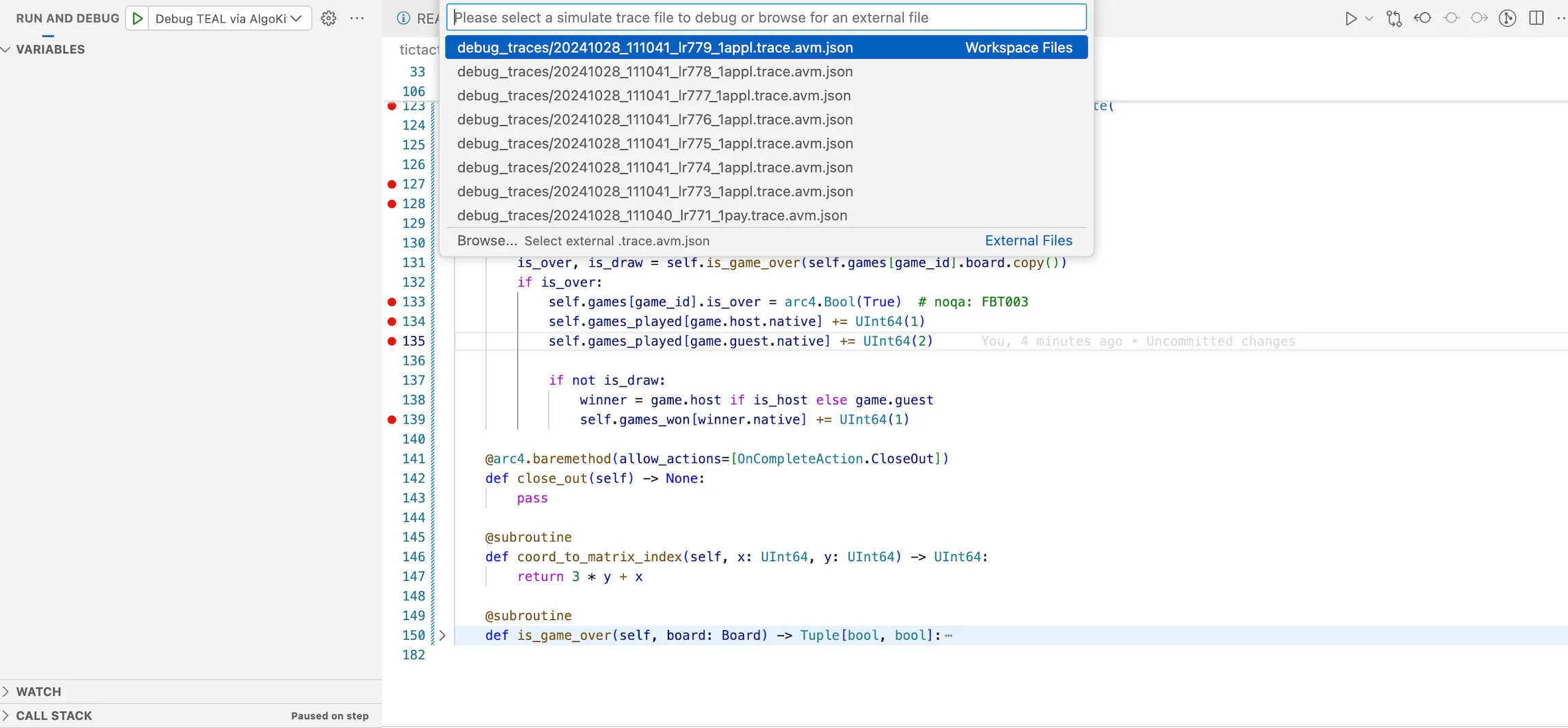

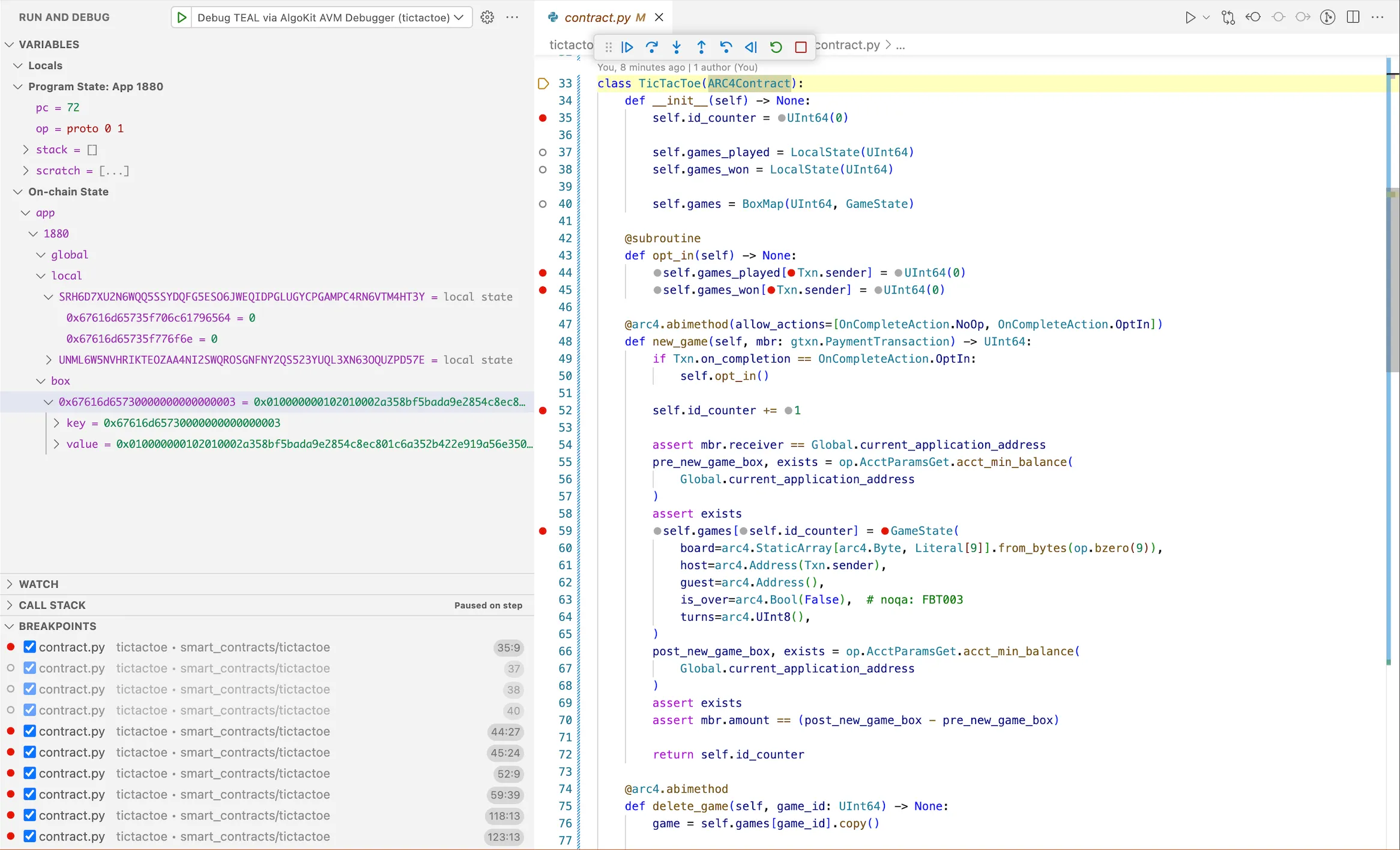

In VSCode, open Run and Debug from the left sidebar and select debug TEAL via Algokit AVM Debugger. This loads the compiled smart contract into the debugger. The debugger then asks you to select the appropriate trace file from the `debug_traces` folder.

Note

This VSCode launch configuration is pre-bundled with the template. Alternatively, you can open the JSON file of the trace you want to debug and click the debug button in the top-right corner. The extension shows this button only on trace JSON files.

Figure: Load Debugger in VSCode

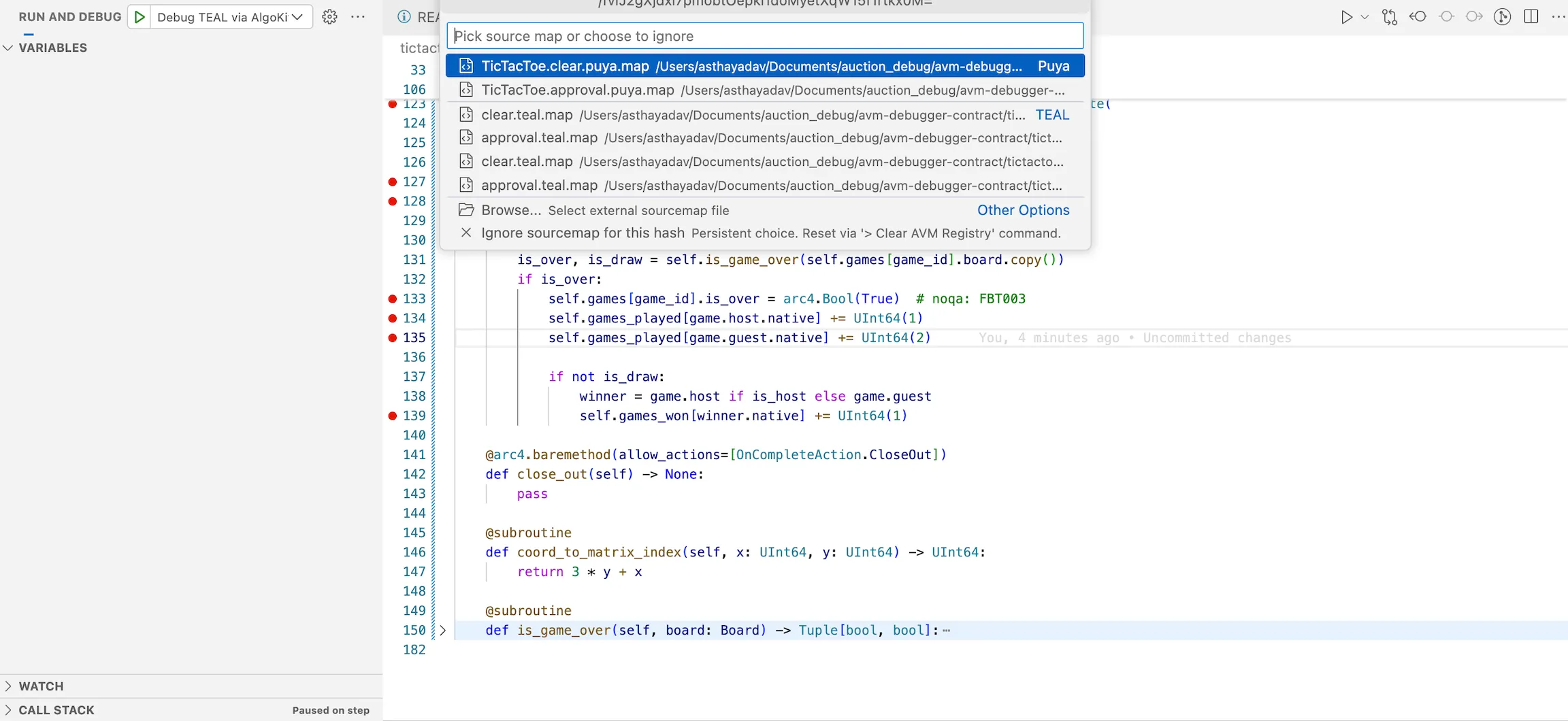

Next, the debugger asks you to select the source map file. Select `approval.puya.map` to tell the debug extension to use Puya source maps for this trace, so that you can step through the high-level Python code. You may later want to switch the debugger to TEAL source maps, or to Puya source maps from other frontends such as TypeScript. To do so, remove the corresponding record from the `.algokit/sources/sources.avm.json` file, or run the [debugger commands via the VSCode command palette](https://github.com/algorandfoundation/algokit-avm-vscode-debugger#vscode-commands).

## Step 5: Debugging the smart contract


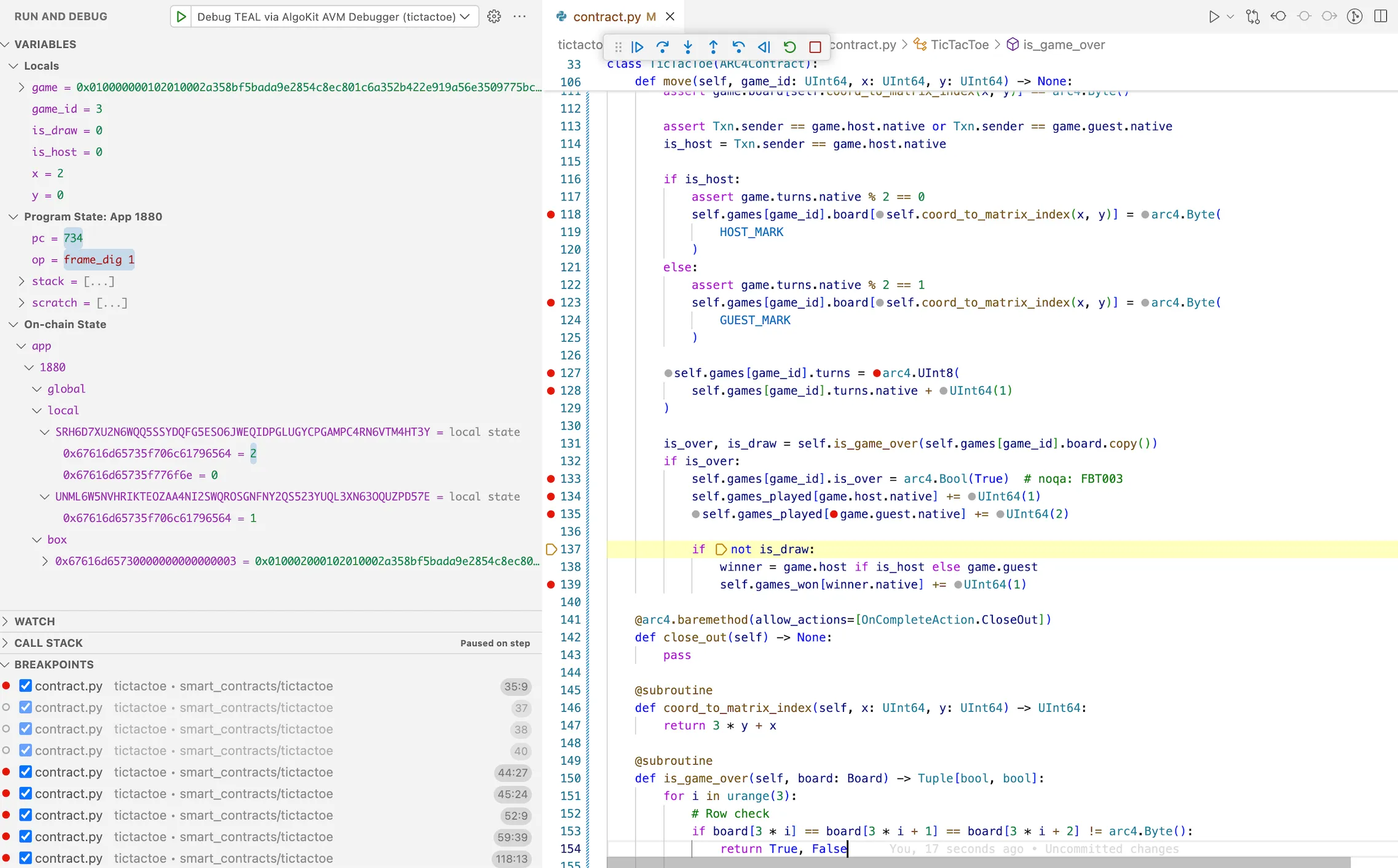

Let’s now debug the issue:

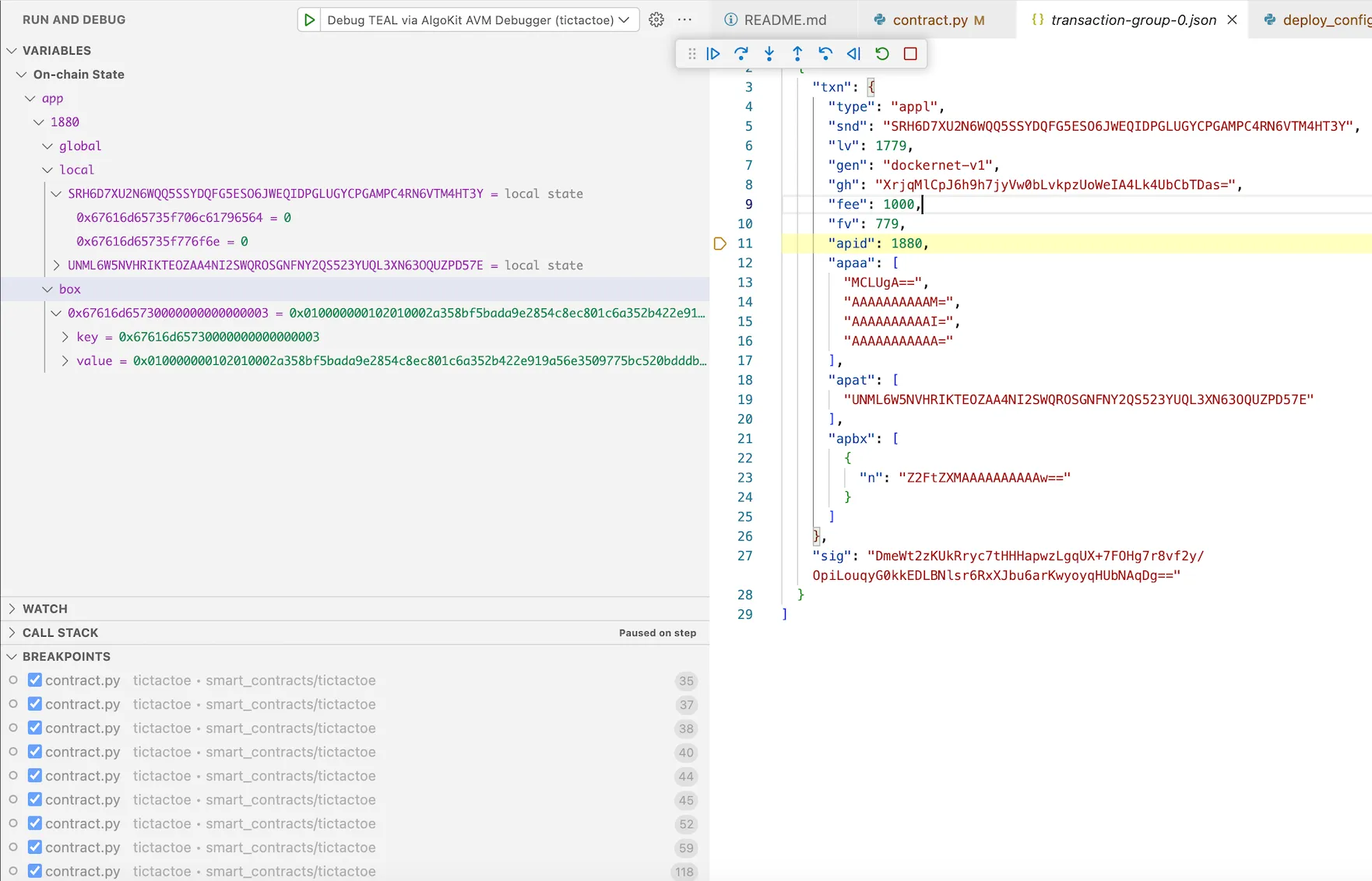

Enter the `app_id` entry of the `transaction_group.json` file to open the contract. Set a breakpoint in the `move` method. You can add further breakpoints as needed.

On the left side you can see `Program State`, which includes the `program counter`, `opcode`, `stack` and `scratch space`. Under `On-chain State` you can see the `global`, `local` and `box` storage of the application deployed on localnet.

Note

We used localnet, but the contracts can be deployed on any other network. A trace file is largely agnostic of the network it was generated on. The debugger works with any complete simulate trace that contains the state, stack and scratch changes in its execution trace.

Once you start stepping through the execution, these panels are populated according to the contract's state. You can now step into the code.

## Step 6: Analyze the Output


Observe that the guest's `games_played` value is incremented by 2 (incorrectly), whereas the host's is incremented by 1 (correctly).

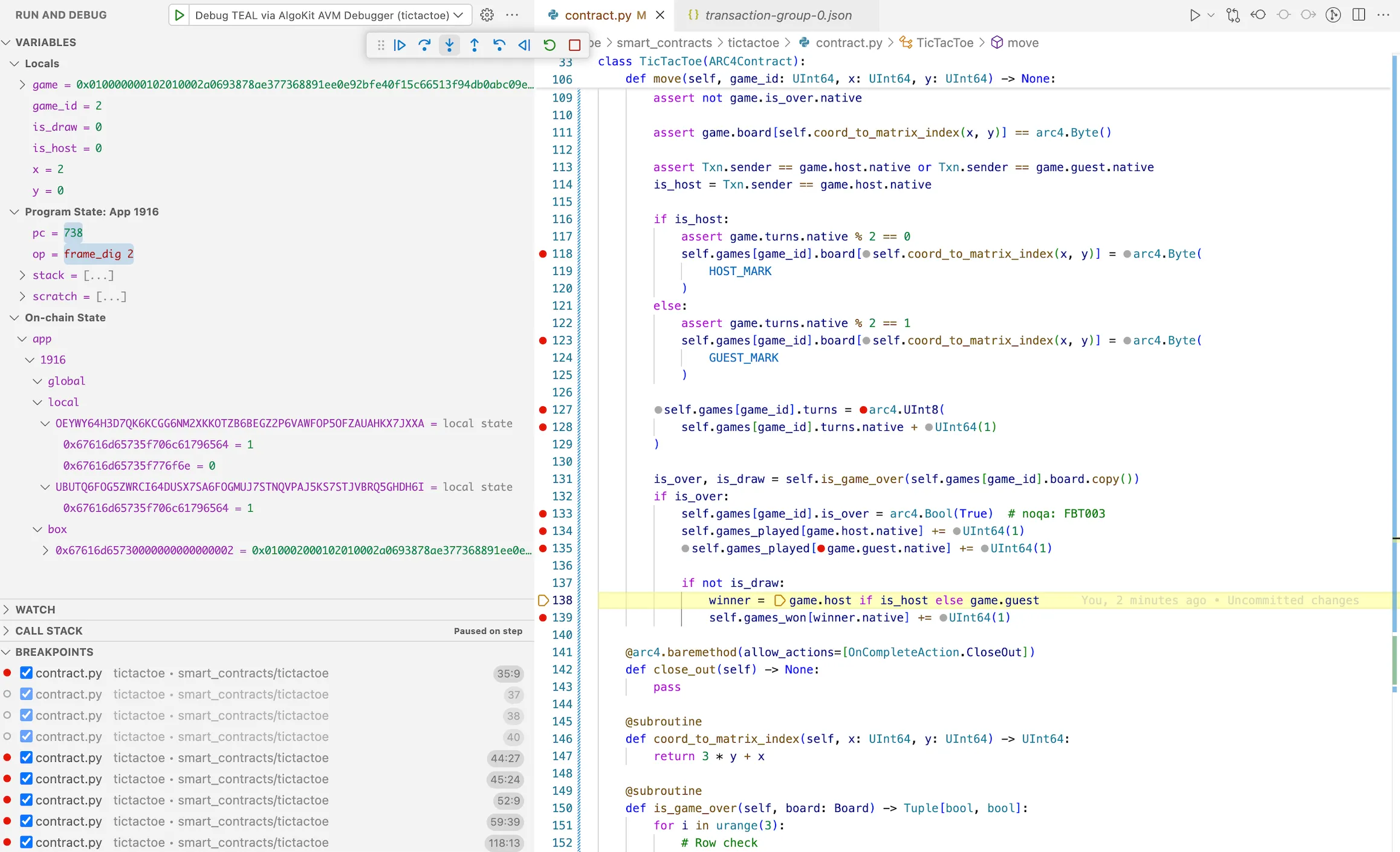

## Step 7: Fix the Bug


Now that we’ve identified the bug, let’s fix it in our original smart contract in `move` method:


```py
@arc4.abimethod
def move(self, game_id: UInt64, x: UInt64, y: UInt64) -> None:
    game = self.games[game_id].copy()

    assert not game.is_over.native
    assert game.board[self.coord_to_matrix_index(x, y)] == arc4.Byte()
    assert Txn.sender == game.host.native or Txn.sender == game.guest.native
    is_host = Txn.sender == game.host.native

    if is_host:
        assert game.turns.native % 2 == 0
        self.games[game_id].board[self.coord_to_matrix_index(x, y)] = arc4.Byte(
            HOST_MARK
        )
    else:
        assert game.turns.native % 2 == 1
        self.games[game_id].board[self.coord_to_matrix_index(x, y)] = arc4.Byte(
            GUEST_MARK
        )

    self.games[game_id].turns = arc4.UInt8(self.games[game_id].turns.native + UInt64(1))

    is_over, is_draw = self.is_game_over(self.games[game_id].board.copy())
    if is_over:
        self.games[game_id].is_over = arc4.Bool(True)
        self.games_played[game.host.native] += UInt64(1)
        self.games_played[game.guest.native] += UInt64(1)  # changed here

        if not is_draw:
            winner = game.host if is_host else game.guest
            self.games_won[winner.native] += UInt64(1)
```

## Step 8: Re-deploy


Re-compile and re-deploy the contract by following Step 3.

## Step 9: Verify again using Debugger


Reset the `sources.avm.json` file, then restart the debugger and select the `approval.puya.map` file. Repeat Steps 4 to 6 to verify that `games_played` now updates as expected, which confirms that the bug has been fixed.
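You can also double-check the fix outside the debugger by reading each player's local state after a game. The sketch below is minimal and assumes the algod account-application response shape: `app-local-state` → `key-value`, with Base64-encoded keys, and `type` 1 for bytes or 2 for uint64. `decode_local_state` is a hypothetical helper.

```python
import base64
from typing import Any, Union


def decode_local_state(app_local_state: dict[str, Any]) -> dict[str, Union[int, bytes]]:
    """Turn algod's key-value list into {key: value} (assumes UTF-8 keys)."""
    state: dict[str, Union[int, bytes]] = {}
    for entry in app_local_state.get("key-value", []):
        key = base64.b64decode(entry["key"]).decode()
        value = entry["value"]
        if value["type"] == 2:  # uint64 value; a zero may be omitted
            state[key] = value.get("uint", 0)
        else:  # type 1: byte-slice value, Base64-encoded
            state[key] = base64.b64decode(value.get("bytes", ""))
    return state
```

For example, in a script like the deploy code you could pass `algod_client.account_application_info(guest.address, app_client.app_id)["app-local-state"]` to this helper. After one finished game, both players' `games_played` should be `1`.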

Note

You can also use the `approval.teal.map` file instead of the Puya source map for a lower-level TEAL debugging session. To automate clearing or editing the registry file, refer to the [Algokit AVM VSCode Debugger commands](https://github.com/algorandfoundation/algokit-avm-vscode-debugger#vscode-commands), available via the VSCode command palette.

## Summary


In this tutorial, we used the AlgoKit AVM Debugger, together with the traces generated by AlgoKit Python utils, to debug an Algorand smart contract. We set up a debugging environment, loaded a smart contract with a planted bug, stepped through its execution, and identified the issue. This process is invaluable when developing and testing smart contracts on the Algorand blockchain. Test your smart contracts thoroughly before deploying them to MainNet, to make sure they function as expected and to prevent costly errors in production.

## Next steps


To learn more about debugging sessions, refer to the [debugger extension](/docs/algokit-utils/python/latest/concepts/advanced/debugging) documentation.

# Application Client Usage

After you use the CLI tool to generate an application client, you will have a Python file containing several type definitions, an application factory class, and an application client class named after the target smart contract. For example, if the contract name is `HelloWorldApp`, you will get `HelloWorldAppFactory` and `HelloWorldAppClient` classes. The contract name is also used to prefix a number of other types in the generated file, so you can generate clients for multiple smart contracts in one project without ambiguous type names.

> [!NOTE]
> If you are unsure whether to use the factory or the client, use this mental model. Use the client if you know the app ID. Use the factory if you don't know the app ID (deferred knowledge, or the instance doesn't exist on the blockchain yet), or if you have multiple app IDs.

## Creating an application client instance


The first step to using the factory/client is to create an instance. You can do this via the constructor or, more easily, via an [`AlgorandClient`](https://github.com/algorandfoundation/algokit-utils-py/blob/main/docs/markdown/capabilities/algorand-client) instance using `algorand.client.get_typed_app_factory()` and the `algorand.client.get_typed_app_client_by_*()` methods (see code examples below).

Once you have an instance, if you want an escape hatch to the [underlying untyped `AppClient` / `AppFactory`](https://github.com/algorandfoundation/algokit-utils-py/blob/main/docs/markdown/capabilities/app-client) you can access them as a property:

```python
# Untyped `AppFactory`
untyped_factory = factory.app_factory

# Untyped `AppClient`
untyped_client = client.app_client
```

### Get a factory


The [app factory](https://github.com/algorandfoundation/algokit-utils-py/blob/main/docs/markdown/capabilities/app-client) allows you to create and deploy one or more app instances and to create one or more app clients to interact with those (or other) app instances when you need to create clients for multiple apps.

If you only need a single client for a single, known app then you can skip using the factory and just [use a client](#get-a-client-by-app-id).

```python
# Via AlgorandClient
factory = algorand.client.get_typed_app_factory(HelloWorldAppFactory)

# Or, using the options:
factory_with_optional_params = algorand.client.get_typed_app_factory(
    HelloWorldAppFactory,
    default_sender="DEFAULTSENDERADDRESS",
    app_name="OverriddenName",
    compilation_params={
        "deletable": True,
        "updatable": False,
        "deploy_time_params": {
            "VALUE": "1",
        },
    },
    version="2.0",
)

# Or via the constructor
factory = HelloWorldAppFactory(algorand)

# with options:
factory = HelloWorldAppFactory(
    algorand,
    default_sender="DEFAULTSENDERADDRESS",
    app_name="OverriddenName",
    compilation_params={
        "deletable": True,
        "updatable": False,
        "deploy_time_params": {
            "VALUE": "1",
        },
    },
    version="2.0",
)
```

### Get a client by app ID


The typed [app client](https://github.com/algorandfoundation/algokit-utils-py/blob/main/docs/markdown/capabilities/app-client) can be retrieved by ID.

You can get one from a previously created app factory, from an `AlgorandClient` instance, or via the constructor:

```python
# Via factory
factory = algorand.client.get_typed_app_factory(HelloWorldAppFactory)
client = factory.get_app_client_by_id(app_id=123)
client_with_optional_params = factory.get_app_client_by_id(
    app_id=123,
    default_sender="DEFAULTSENDERADDRESS",
    app_name="OverriddenAppName",
    # Can also pass in `approval_source_map`, and `clear_source_map`
)

# Via AlgorandClient
client = algorand.client.get_typed_app_client_by_id(HelloWorldAppClient, app_id=123)
client_with_optional_params = algorand.client.get_typed_app_client_by_id(
    HelloWorldAppClient,
    app_id=123,
    default_sender="DEFAULTSENDERADDRESS",
    app_name="OverriddenAppName",
    # Can also pass in `approval_source_map`, and `clear_source_map`
)

# Via constructor
client = HelloWorldAppClient(
    algorand=algorand,
    app_id=123,
)
client_with_optional_params = HelloWorldAppClient(
    algorand=algorand,
    app_id=123,
    default_sender="DEFAULTSENDERADDRESS",
    app_name="OverriddenAppName",
    # Can also pass in `approval_source_map`, and `clear_source_map`
)
```

### Get a client by creator address and name


The typed [app client](https://github.com/algorandfoundation/algokit-utils-py/blob/main/docs/markdown/capabilities/app-client) can be retrieved by looking up apps by name for the given creator address if they were deployed using [AlgoKit deployment conventions](https://github.com/algorandfoundation/algokit-utils-py/blob/main/docs/markdown/capabilities/app-deploy).

You can get one by using a previously created app factory:

```python

factory = algorand.client.get_typed_app_factory(HelloWorldAppFactory)

client = factory.get_app_client_by_creator_and_name(creator_address="CREATORADDRESS")

client_with_optional_params = factory.get_app_client_by_creator_and_name(

creator_address="CREATORADDRESS",

default_sender="DEFAULTSENDERADDRESS",

app_name="OverriddenAppName",

# Can also pass in `approval_source_map`, and `clear_source_map`

)

```

Or you can get one using an `AlgorandClient` instance:

```python

client = algorand.client.get_typed_app_client_by_creator_and_name(

HelloWorldAppClient,

creator_address="CREATORADDRESS",

)

client_with_optional_params = algorand.client.get_typed_app_client_by_creator_and_name(

HelloWorldAppClient,

creator_address="CREATORADDRESS",

default_sender="DEFAULTSENDERADDRESS",

app_name="OverriddenAppName",

ignore_cache=True,

# Can also pass in `app_lookup_cache`, `approval_source_map`, and `clear_source_map`

)

```

### Get a client by network


The typed [app client](https://github.com/algorandfoundation/algokit-utils-py/blob/main/docs/markdown/capabilities/app-client) can be retrieved by network using any included network IDs within the ARC-56 app spec for the current network.

You can get one by using a static method on the app client:

```python

client = HelloWorldAppClient.from_network(algorand)

client_with_optional_params = HelloWorldAppClient.from_network(

algorand,

default_sender="DEFAULTSENDERADDRESS",

app_name="OverriddenAppName",

# Can also pass in `approval_source_map`, and `clear_source_map`

)

```

Or you can get one using an `AlgorandClient` instance:

```python

client = algorand.client.get_typed_app_client_by_network(HelloWorldAppClient)

client_with_optional_params = algorand.client.get_typed_app_client_by_network(

HelloWorldAppClient,

default_sender="DEFAULTSENDERADDRESS",

app_name="OverriddenAppName",

# Can also pass in `approval_source_map`, and `clear_source_map`

)

```

## Deploying a smart contract (create, update, delete, deploy)


The app factory and client will variously include methods for creating (factory), updating (client), and deleting (client) the smart contract based on the presence of relevant on completion actions and call config values in the ARC-32 / ARC-56 application spec file. If a smart contract does not support being updated for instance, then no update methods will be generated in the client.

In addition, the app factory will also include a `deploy` method which will…

* create the application if it doesn’t already exist

* update or recreate the application if it does exist, but differs from the version the client is built on

* recreate the application (and optionally delete the old version) if the deployed version is incompatible with being updated to the client version

* do nothing if the application is already deployed and up to date.

You can find more specifics of this behaviour in the [algokit-utils](https://github.com/algorandfoundation/algokit-utils-py/blob/main/docs/markdown/capabilities/app-deploy) docs.
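The decision list above can be modelled as a small pure function. This is a conceptual sketch, not the algokit-utils implementation. A real deployment compares compiled programs and state schemas fetched from the network. The function name and the policy strings are hypothetical stand-ins that loosely mirror the `OnUpdate` / `OnSchemaBreak` options, and the `"fail"` default was chosen only for the sketch.

```python
from typing import Optional


def plan_deploy(
    deployed_program: Optional[bytes],
    new_program: bytes,
    schema_break: bool,
    on_update: str = "fail",
    on_schema_break: str = "fail",
) -> str:
    """Return "create", "nothing", or the configured policy ("update", "replace", "append")."""
    if deployed_program is None:
        return "create"  # no existing app: create it
    if schema_break:
        decision = on_schema_break  # incompatible schema: an in-place update is impossible
    elif deployed_program != new_program:
        decision = on_update  # compatible schema, different code
    else:
        return "nothing"  # already deployed and up to date
    if decision == "fail":
        raise RuntimeError("deployed app differs from the client version and policy is 'fail'")
    return decision
```

Checking the schema before comparing programs reflects the rule that an incompatible deployment must be recreated rather than updated.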

### Create


To create an app you need to use the factory. The return value will include a typed client instance for the created app.

```python
factory = algorand.client.get_typed_app_factory(HelloWorldAppFactory)

# Create the application using a bare call
result, client = factory.send.create.bare()

# Pass in some compilation flags
factory.send.create.bare(compilation_params={
    "updatable": True,
    "deletable": True,
})

# Create the application using a specific on completion action (ie. not a no_op)
factory.send.create.bare(params=CommonAppFactoryCallParams(on_complete=OnApplicationComplete.OptIn))

# Create the application using an ABI method (ie. not a bare call)
factory.send.create.named_create(
    args=NamedCreateArgs(
        arg1=123,
        arg2="foo",
    ),
)

# Pass compilation flags and on completion actions to an ABI create call
factory.send.create.named_create(
    args=NamedCreateArgs(
        arg1=123,
        arg2="foo",
    ),  # Note also available as a typed tuple argument
    compilation_params={
        "updatable": True,
        "deletable": True,
    },
    params=CommonAppFactoryCallParams(on_complete=OnApplicationComplete.OptIn),
)
```

If you want to get a built transaction without sending it, use `factory.create_transaction.create...` rather than `factory.send.create...`. If you want transaction parameters ready to pass in as an ABI argument or to a `TransactionComposer` call, use `factory.params.create...`.

### Update and Delete calls


To update or delete an app you need to use the client.

```python
client = algorand.client.get_typed_app_client_by_id(HelloWorldAppClient, app_id=123)

# Update the application using a bare call
client.send.update.bare()

# Pass in compilation flags
client.send.update.bare(compilation_params={
    "updatable": True,
    "deletable": False,
})

# Update the application using an ABI method
client.send.update.named_update(
    args=NamedUpdateArgs(
        arg1=123,
        arg2="foo",
    ),
)

# Pass compilation flags
client.send.update.named_update(
    args=NamedUpdateArgs(
        arg1=123,
        arg2="foo",
    ),
    compilation_params={
        "updatable": True,
        "deletable": True,
    },
)

# Delete the application using a bare call
client.send.delete.bare()

# Delete the application using an ABI method
client.send.delete.named_delete()
```

If you want to get a built transaction without sending it, use `client.create_transaction.update...` / `client.create_transaction.delete...` rather than `client.send.update...` / `client.send.delete...`. If you want transaction parameters ready to pass in as an ABI argument or to a `TransactionComposer` call, use `client.params.update...` / `client.params.delete...`.

### Deploy call


The deploy call will make a create, update, or delete and create, or no call depending on what is required to have the deployed application match the client’s contract version and the configured `on_update` and `on_schema_break` parameters. As such the deploy method allows you to configure arguments for each potential call it may make (via `create_params`, `update_params` and `delete_params`). If the smart contract is not updatable or deletable, those parameters will be omitted.

These param values (`create_params`, `update_params` and `delete_params`) only allow you to specify valid calls that are defined in the ARC-32 / ARC-56 app spec. You control which call is made via the `method` parameter in these objects. If `method` is left out (or set to `None`), a bare call is made. If it is set to the ABI signature of a method, that ABI call is made. If the ABI call requires arguments, their types automatically appear in IntelliSense.

```ts

client.deploy({

createParams: {

onComplete: OnApplicationComplete.OptIn,

},

updateParams: {

method: 'named_update(uint64,string)string',

args: {

arg1: 123,

arg2: 'foo',

},

},

// Can leave this out and it will do an argumentless bare call (if that call is allowed)

//deleteParams: {}

allowUpdate: true,

allowDelete: true,

onUpdate: 'update',

onSchemaBreak: 'replace',

});

```

## Opt in and close out


Methods with an `opt_in` or `close_out` on-completion action are grouped under properties of the same name within the `send`, `create_transaction` and `params` properties of the client. If the smart contract does not handle one of these on-completion actions, the corresponding property is omitted.

```python

# Opt in with bare call

client.send.opt_in.bare()

# Opt in with ABI method

client.create_transaction.opt_in.named_opt_in(args=NamedOptInArgs(arg1=123))

# Close out with bare call

client.params.close_out.bare()

# Close out with ABI method

client.send.close_out.named_close_out(args=NamedCloseOutArgs(arg1="foo"))

```
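This grouping can be pictured as bucketing the app spec's methods by their allowed call actions. The sketch below assumes the ARC-56 layout, where each method lists its allowed on-completions under `actions.call`. `group_by_call_action` is a hypothetical helper, not the generator's code, and it ignores bare actions.

```python
from collections import defaultdict
from typing import Any


def group_by_call_action(app_spec: dict[str, Any]) -> dict[str, list[str]]:
    """Map each call on-completion action (e.g. "OptIn") to the ABI methods allowing it."""
    groups: dict[str, list[str]] = defaultdict(list)
    for method in app_spec.get("methods", []):
        for action in method.get("actions", {}).get("call", []):
            groups[action].append(method["name"])
    return dict(groups)
```

A method that allows several actions, such as `NoOp` and `OptIn`, lands in more than one bucket. This matches how such a method can be reached both as a plain call and under the `opt_in` property.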

## Clear state


All clients will have a clear state method which will call the clear state program of the smart contract.

```python

client.send.clear_state()

client.create_transaction.clear_state()

client.params.clear_state()

```

## No-op calls


The remaining ABI methods which should all have an `on_completion_action` of `OnApplicationComplete.NoOp` will be available on the `send`, `create_transaction` and `params` properties of the client. If a bare no-op call is allowed it will be available via `bare`.

These methods optionally accept `on_complete`. If a method also allows on-completion actions other than no-op, you can pass one of them here. Such methods are also available under the corresponding on-completion property, as described above.

```python

# Call an ABI method which takes no args

client.send.some_method()

# Call a no-op bare call

client.create_transaction.bare()

# Call an ABI method, passing args in as a tuple

client.params.some_other_method(args=(123, "foo"))

```

## Method and argument naming


By default, the names of methods, types and arguments are transformed to `snake_case` to match idiomatic Python, and class names are converted to idiomatic `PascalCase`. To keep the names exactly as they appear in the ARC-32 / ARC-56 app spec file, pass the `-p` or `--preserve-names` option to the type generator.
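As an illustration of the `snake_case` transformation, here is a regex-based converter that handles camelCase names and acronyms. It is a sketch of the general idea only. The generator's own rules may differ on edge cases, and `to_snake_case` is a hypothetical name.

```python
import re


def to_snake_case(name: str) -> str:
    """Convert camelCase / PascalCase (including acronyms) to snake_case."""
    # Split an acronym from a following capitalised word: "ABIValue" -> "ABI_Value"
    name = re.sub(r"([A-Z]+)([A-Z][a-z])", r"\1_\2", name)
    # Split a lowercase letter or digit from a following capital: "helloWorld" -> "hello_World"
    name = re.sub(r"([a-z0-9])([A-Z])", r"\1_\2", name)
    return name.replace("-", "_").lower()
```

Names that are already in `snake_case` pass through unchanged, which is why a contract written in Algorand Python keeps its method names.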

### Method name clashes


The ARC-32 / ARC-56 specification allows two methods to have the same name, as long as they have different ABI signatures. On the client these methods will be emitted with a unique name made up of the method’s full signature. Eg. `create_string_uint32_void`.
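One simple way to derive such a unique name from a full ABI signature is to replace every run of punctuation with an underscore. This sketch reproduces the example above. It is an assumption about the general approach, not the generator's exact rule, which may treat array or tuple types differently. `signature_to_name` is a hypothetical helper.

```python
import re


def signature_to_name(signature: str) -> str:
    """Turn an ABI signature such as "create(string,uint32)void" into an identifier."""
    # Replace each run of "(", ",", ")", "[", "]" etc. with a single underscore
    return re.sub(r"[^0-9A-Za-z_]+", "_", signature).strip("_")
```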

## ABI arguments

Each generated method accepts ABI method call arguments either as a `tuple` or as a `dataclass`, so you can use whichever you prefer. The accepted types are automatically translated from the ABI types specified in the app spec to equivalent Python types.

```python
# ABI method which takes no args
client.send.no_args_method()

# ABI method with args, passed as a dataclass
client.send.other_method(args=OtherMethodArgs(arg1=123, arg2="foo", arg3=bytes([1, 2, 3, 4])))

# Call an ABI method, passing args in as a tuple
client.send.yet_another_method(args=(1, 2, "foo"))
```
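Both forms carry the same information: the argument values in ABI order. The sketch below shows that equivalence, assuming the dataclass declares its fields in the method's argument order. `OtherMethodArgs` here is a hand-written stand-in for a generated class, and `to_positional` is a hypothetical helper rather than part of the generated client.

```python
from dataclasses import dataclass, fields, is_dataclass


@dataclass
class OtherMethodArgs:
    """Hand-written stand-in for a generated args dataclass."""

    arg1: int
    arg2: str
    arg3: bytes


def to_positional(args: object) -> tuple:
    """Normalise tuple or dataclass args into a tuple in ABI argument order."""
    if isinstance(args, tuple):
        return args
    if is_dataclass(args) and not isinstance(args, type):
        # Assumes the dataclass declares its fields in the method's argument order
        return tuple(getattr(args, f.name) for f in fields(args))
    raise TypeError(f"expected a tuple or dataclass instance, got {type(args).__name__}")


as_dataclass = to_positional(OtherMethodArgs(arg1=123, arg2="foo", arg3=bytes([1, 2, 3, 4])))
as_tuple = to_positional((123, "foo", bytes([1, 2, 3, 4])))
print(as_dataclass == as_tuple)  # True
```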

## Structs

If a method takes a struct as a parameter or returns one, you can pass the struct object in directly, and the return value is automatically parsed into the struct object.
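Under the hood, a struct travels as an ABI tuple. A minimal sketch, assuming a hypothetical struct `Operands` declared as `(uint64,uint64)`: ARC-4 encodes each `uint64` as 8 big-endian bytes, so this static tuple is simply the two values concatenated, and decoding maps them back onto the struct's fields. The generated client handles this for you; `encode_operands` and `decode_operands` are illustrative names only.

```python
import struct
from dataclasses import astuple, dataclass


@dataclass(frozen=True)
class Operands:
    """Hypothetical struct declared in an app spec as the ABI tuple (uint64,uint64)."""

    a: int
    b: int


def encode_operands(value: Operands) -> bytes:
    # A static ABI tuple of uint64s is each field as 8 big-endian bytes, concatenated
    return struct.pack(">QQ", *astuple(value))


def decode_operands(raw: bytes) -> Operands:
    # Parse the raw ABI bytes back into the struct object
    a, b = struct.unpack(">QQ", raw)
    return Operands(a=a, b=b)


encoded = encode_operands(Operands(a=4, b=3))
print(encoded.hex())             # 00000000000000040000000000000003
print(decode_operands(encoded))  # Operands(a=4, b=3)
```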

## Additional parameters

Each ABI method and bare call on the client also accepts additional parameters alongside the core method, args, etc. These mirror the parameters available on the underlying [app factory / client](https://github.com/algorandfoundation/algokit-utils-py/blob/main/docs/markdown/capabilities/app-client).

```python
client.send.some_method(
    args=SomeMethodArgs(arg1=123),
    # Additional parameters go here
)

client.send.opt_in.bare({
    # Additional parameters go here
})
```

## Composing transactions

Algorand allows multiple transactions to be composed into a single atomic transaction group to be committed (or rejected) as one.
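The all-or-nothing behaviour can be illustrated with a toy in-memory ledger. This is not how a node executes groups: it ignores fees, minimum balances and signatures, and `Payment` and `apply_group` are hypothetical names. It does encode Algorand's limit of 16 transactions per group.

```python
from dataclasses import dataclass
from typing import Dict, List

MAX_GROUP_SIZE = 16  # Algorand's limit on transactions in one atomic group


@dataclass(frozen=True)
class Payment:
    sender: str
    receiver: str
    amount: int  # microAlgo


def apply_group(balances: Dict[str, int], group: List[Payment]) -> Dict[str, int]:
    """Toy model: apply every payment in the group or none of them."""
    if len(group) > MAX_GROUP_SIZE:
        raise ValueError(f"group has {len(group)} transactions; the limit is {MAX_GROUP_SIZE}")
    pending = dict(balances)  # work on a copy so a failure leaves the input untouched
    for txn in group:
        if pending.get(txn.sender, 0) < txn.amount:
            raise ValueError(f"{txn.sender} cannot pay {txn.amount}; the whole group is rejected")
        pending[txn.sender] = pending.get(txn.sender, 0) - txn.amount
        pending[txn.receiver] = pending.get(txn.receiver, 0) + txn.amount
    return pending


balances = {"alice": 100, "bob": 0}
print(apply_group(balances, [Payment("alice", "bob", 60), Payment("bob", "carol", 10)]))
# {'alice': 40, 'bob': 50, 'carol': 10}
try:
    apply_group(balances, [Payment("alice", "bob", 60), Payment("alice", "bob", 60)])
except ValueError as err:
    print(err)  # alice cannot pay 60; the whole group is rejected
print(balances)  # {'alice': 100, 'bob': 0}
```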

### Using the fluent composer

The client exposes a fluent transaction composer which allows you to build up a group before sending it. The return values will be strongly typed based on the methods you add to the composer.

```python
result = (
    client.new_group()
    .method_one(args=SomeMethodArgs(arg1=123), box_references=["V"])
    # Non-ABI transactions can still be added to the group
    .add_transaction(
        client.app_client.create_transaction.fund_app_account(
            FundAppAccountParams(amount=AlgoAmount.from_micro_algos(5000))
        )
    )
    .method_two(args=SomeOtherMethodArgs(arg1="foo"))
    .send()
)

# Strongly typed as the return type of method_one
result_of_method_one = result.returns[0]

# Strongly typed as the return type of method_two
result_of_method_two = result.returns[1]
```
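The fluent pattern relies on each builder method returning the composer itself, and on `returns` holding one entry per ABI method call in the order the calls were added, with non-ABI transactions not taking a slot (which is why `returns[1]` above is `method_two`'s result). A toy, untyped sketch of that shape; `ToyComposer` and `ToyGroupResult` are hypothetical and do not reflect the generated composer's internals.

```python
from dataclasses import dataclass
from typing import Any, Callable, List, Tuple


@dataclass
class ToyGroupResult:
    returns: List[Any]
    transaction_count: int


class ToyComposer:
    """Hypothetical fluent builder: each method queues a step and returns self."""

    def __init__(self) -> None:
        self._steps: List[Tuple[str, Any]] = []

    def method_call(self, produce_return: Callable[[], Any]) -> "ToyComposer":
        # Stand-in for an ABI method call; the callable simulates its return value
        self._steps.append(("abi", produce_return))
        return self

    def add_transaction(self, description: str) -> "ToyComposer":
        # Stand-in for a non-ABI transaction such as a payment
        self._steps.append(("raw", description))
        return self

    def send(self) -> ToyGroupResult:
        # Only ABI method calls get an entry in `returns`, in the order they were added
        returns = [step() for kind, step in self._steps if kind == "abi"]
        return ToyGroupResult(returns=returns, transaction_count=len(self._steps))


result = (
    ToyComposer()
    .method_call(lambda: 42)
    .add_transaction("fund app account")
    .method_call(lambda: "hello")
    .send()
)
print(result.returns)            # [42, 'hello']
print(result.transaction_count)  # 3
```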

### Manually with the TransactionComposer

Multiple transactions can also be composed using the `TransactionComposer` class.

```python
result = (
    algorand.new_group()
    .add_app_call_method_call(
        client.params.method_one(args=SomeMethodArgs(arg1=123), box_references=["V"])
    )
    .add_payment(
        client.app_client.params.fund_app_account(
            FundAppAccountParams(amount=AlgoAmount.from_micro_algos(5000))
        )
    )
    .add_app_call_method_call(client.params.method_two(args=SomeOtherMethodArgs(arg1="foo")))
    .send()
)

# returns contains a result object for each ABI method call in the transaction group
for return_value in result.returns:
    print(return_value)
```

## State

You can access any local, global and box storage state values that are defined in the ARC-32 / ARC-56 app spec.

You do this via the `state` property, which has three sub-properties, one for each kind of state: `state.global_state`, `state.local(address)` and `state.box`. Each of these exposes methods for every key or map registered in the app spec.

Maps have a `value(key)` method to get a single value from the map by key, and a `get_map()` method to return all of the map's values. Keys have a `{key_name}()` method to get the value for that key, and there is also a `get_all()` method that returns an object with all key values.

These methods return values of the Python type corresponding to each type in the app spec, and any structs are parsed as the struct object.

```python
default_sender = "SENDER"  # placeholder; use a real account address

factory = algorand.client.get_typed_app_factory(Arc56TestFactory, default_sender=default_sender)

client, result = factory.send.create.create_application(
    args=[],
    compilation_params={"deploy_time_params": {"some_number": 1337}},
)

assert client.state.global_state.global_key() == 1337
# another_app_client: a second client, assumed to be created with some_number set to 1338
assert another_app_client.state.global_state.global_key() == 1338
assert client.state.global_state.global_map.value("foo") == {
    "foo": 13,
    "bar": 37,
}

client.app_client.fund_app_account(
    FundAppAccountParams(amount=AlgoAmount.from_micro_algos(1_000_000))
)

client.send.opt_in.opt_in_to_application(
    args=[],
)

assert client.state.local(default_sender).local_key() == 1337
assert client.state.local(default_sender).local_map.value("foo") == "bar"
assert client.state.box.box_key() == "baz"
assert client.state.box.box_map.value({
    "add": {"a": 1, "b": 2},
    "subtract": {"a": 4, "b": 3},
}) == {
    "sum": 3,
    "difference": 1,
}
```
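The typed accessors spare you from decoding raw on-chain state. For comparison, here is a minimal sketch of decoding the global state list that algod returns for an application, where keys are base64-encoded and each value has `type` 1 (bytes) or 2 (uint64). `decode_global_state` is a hypothetical helper and `raw_state` is made-up sample data; the typed client additionally maps keys to the names and types declared in the app spec, which this sketch does not attempt.

```python
import base64
from typing import Any, Dict, List, Union

TEAL_BYTES = 1  # algod TealValue "type" for byte-slice values
TEAL_UINT = 2  # algod TealValue "type" for uint64 values


def decode_global_state(raw: List[Dict[str, Any]]) -> Dict[bytes, Union[int, bytes]]:
    """Decode algod's list of {key, value} entries into {key_bytes: int or bytes}."""
    state: Dict[bytes, Union[int, bytes]] = {}
    for entry in raw:
        key = base64.b64decode(entry["key"])
        value = entry["value"]
        if value["type"] == TEAL_UINT:
            state[key] = value["uint"]
        elif value["type"] == TEAL_BYTES:
            state[key] = base64.b64decode(value["bytes"])
        else:
            raise ValueError(f"unknown TEAL value type: {value['type']}")
    return state


def b64(data: bytes) -> str:
    return base64.b64encode(data).decode()


# Made-up sample data shaped like algod's global state response
raw_state = [
    {"key": b64(b"global_key"), "value": {"type": TEAL_UINT, "uint": 1337, "bytes": ""}},
    {"key": b64(b"greeting"), "value": {"type": TEAL_BYTES, "uint": 0, "bytes": b64(b"baz")}},
]
print(decode_global_state(raw_state))  # {b'global_key': 1337, b'greeting': b'baz'}
```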

# Application Client Usage

After using the CLI tool to generate an application client, you will end up with a TypeScript file containing several type definitions, an application factory class and an application client class, named after the target smart contract. For example, if the contract name is `HelloWorldApp`, you will end up with `HelloWorldAppFactory` and `HelloWorldAppClient` classes. The contract name is also used to prefix a number of other types in the generated file, which allows you to generate clients for multiple smart contracts in a single project without ambiguous type names.

> [!NOTE]
> If you are unsure whether to use the factory or the client, the mental model is: use the client if you know the app ID; use the factory if you don't know the app ID (deferred knowledge, or the instance doesn't exist on the blockchain yet) or if you have multiple app IDs.

## Creating an application client instance

The first step to using the factory/client is to create an instance. You can do this via the constructor or, more easily, via an [`AlgorandClient`](https://github.com/algorandfoundation/algokit-utils-ts/blob/main/docs/capabilities/algorand-client) instance using `algorand.client.getTypedAppFactory()` and `algorand.client.getTypedAppClient*()` (see the code examples below).

Once you have an instance, if you want an escape hatch to the [underlying untyped `AppClient` / `AppFactory`](https://github.com/algorandfoundation/algokit-utils-ts/blob/main/docs/capabilities/app-client) you can access them as a property:

```typescript
// Untyped `AppFactory`
const untypedFactory = factory.appFactory;

// Untyped `AppClient`
const untypedClient = client.appClient;
```

### Get a factory

The [app factory](https://github.com/algorandfoundation/algokit-utils-ts/blob/main/docs/capabilities/app-client) lets you create and deploy one or more app instances, and create one or more app clients to interact with those (or other) app instances. Use it when you need clients for multiple apps.

If you only need a single client for a single, known app then you can skip using the factory and just [use a client](#get-a-client-by-app-id).

```typescript
// Via AlgorandClient
const factory = algorand.client.getTypedAppFactory(HelloWorldAppFactory);

// Or, using the options:
const factoryWithOptionalParams = algorand.client.getTypedAppFactory(HelloWorldAppFactory, {
  defaultSender: 'DEFAULTSENDERADDRESS',
  appName: 'OverriddenName',
  deletable: true,
  updatable: false,
  deployTimeParams: {
    VALUE: '1',
  },
  version: '2.0',
});

// Or via the constructor
const factoryViaConstructor = new HelloWorldAppFactory({
  algorand,
});

// with options:
const factoryViaConstructorWithOptions = new HelloWorldAppFactory({
  algorand,
  defaultSender: 'DEFAULTSENDERADDRESS',
  appName: 'OverriddenName',
  deletable: true,
  updatable: false,
  deployTimeParams: {
    VALUE: '1',
  },
  version: '2.0',
});
```

### Get a client by app ID

The typed [app client](https://github.com/algorandfoundation/algokit-utils-ts/blob/main/docs/capabilities/app-client) can be retrieved by ID.

You can get one from a previously created app factory, from an `AlgorandClient` instance, or via the constructor:

```typescript
// Via factory
const factory = algorand.client.getTypedAppFactory(HelloWorldAppFactory);
const client = factory.getAppClientById({ appId: 123n });
const clientWithOptionalParams = factory.getAppClientById({
  appId: 123n,
  defaultSender: 'DEFAULTSENDERADDRESS',
  appName: 'OverriddenAppName',
  // Can also pass in `approvalSourceMap` and `clearSourceMap`
});

// Via AlgorandClient
const clientViaAlgorand = algorand.client.getTypedAppClientById(HelloWorldAppClient, {
  appId: 123n,
});
const clientViaAlgorandWithOptionalParams = algorand.client.getTypedAppClientById(HelloWorldAppClient, {
  appId: 123n,
  defaultSender: 'DEFAULTSENDERADDRESS',
  appName: 'OverriddenAppName',
  // Can also pass in `approvalSourceMap` and `clearSourceMap`
});

// Via constructor
const clientViaConstructor = new HelloWorldAppClient({
  algorand,
  appId: 123n,
});
const clientViaConstructorWithOptionalParams = new HelloWorldAppClient({
  algorand,
  appId: 123n,
  defaultSender: 'DEFAULTSENDERADDRESS',
  appName: 'OverriddenAppName',
  // Can also pass in `approvalSourceMap` and `clearSourceMap`
});
```

### Get a client by creator address and name

The typed [app client](https://github.com/algorandfoundation/algokit-utils-ts/blob/main/docs/capabilities/app-client) can be retrieved by looking up apps by name for the given creator address if they were deployed using [AlgoKit deployment conventions](https://github.com/algorandfoundation/algokit-utils-ts/blob/main/docs/capabilities/app-deploy).

You can get one by using a previously created app factory:

```typescript

const factory = algorand.client.getTypedAppFactory(HelloWorldAppFactory);